TL;DR

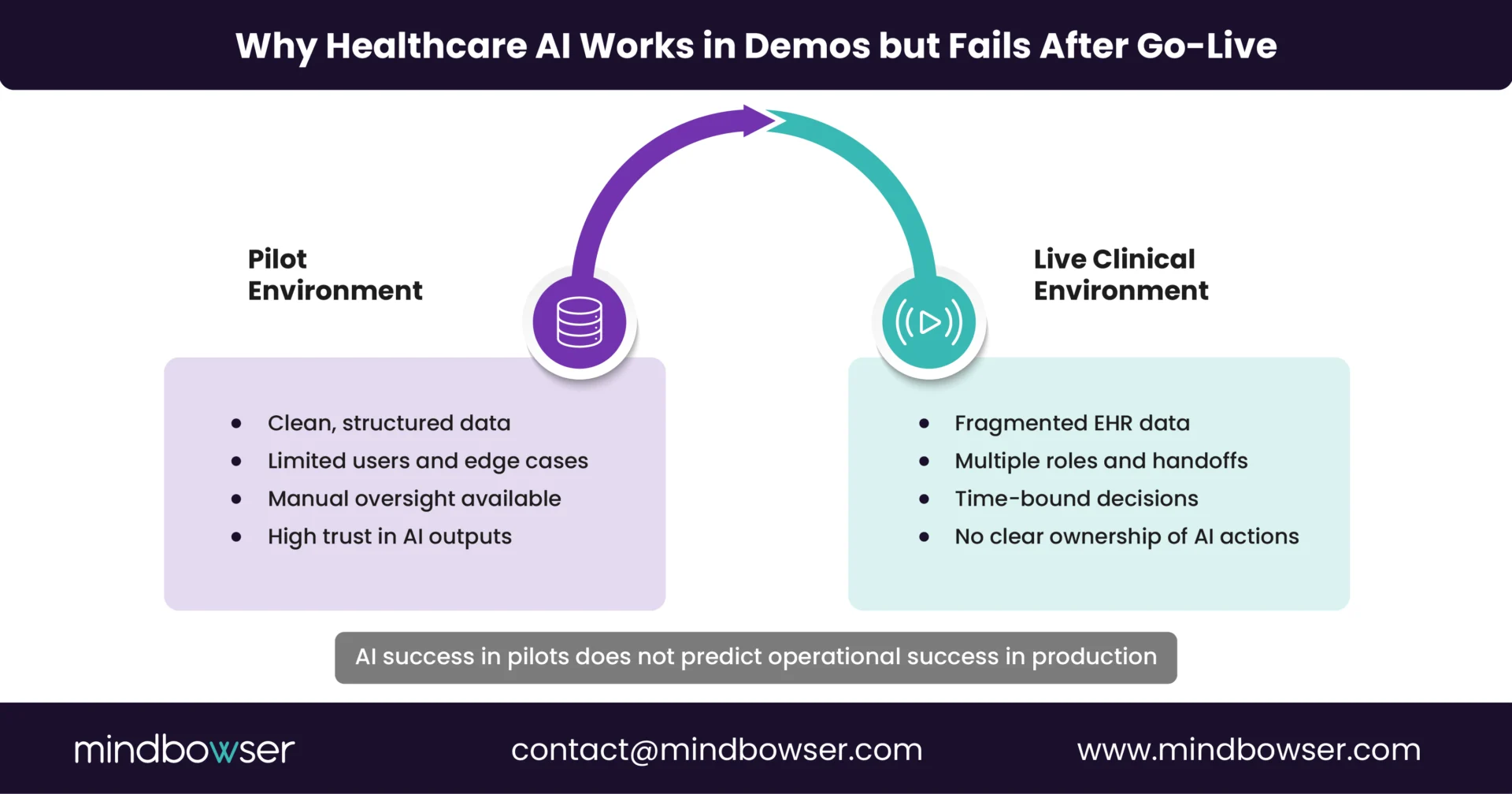

- Most healthcare AI initiatives appear successful in pilots but fail after go-live due to fragmented EHR integrations and fragile workflows.

- AI agents fail when they operate outside real clinical workflows or rely on partial, non-contextual data from downstream systems.

- Interoperability is not a data problem alone; it is a workflow, ownership, and operational accountability problem.

- Sustainable AI in healthcare requires workflow-first design, EHR-native integration, and clear guardrails around automation, access, and auditability.

If healthcare AI agents are getting smarter, faster, and more capable, why do so many of them struggle once they move beyond pilots and proofs of concept?

On paper, the case for AI agents is compelling. They can summarize charts, automate care coordination tasks, flag risks earlier, and reduce administrative load on clinical teams. During demonstrations and limited pilots, these agents appear to function as promised.

The problem shows up after go-live.

Once AI agents are embedded into real clinical environments, they encounter fragmented EHR integrations, inconsistent data flows, and workflows that were never designed to support autonomous or semi-autonomous systems. At that point, adoption drops, workarounds emerge, and teams quietly stop trusting the outputs.

This is not an issue with AI capabilities.

It is an issue of interoperability and workflow design.

I. Where Do AI Agents Actually Break After Go-Live?

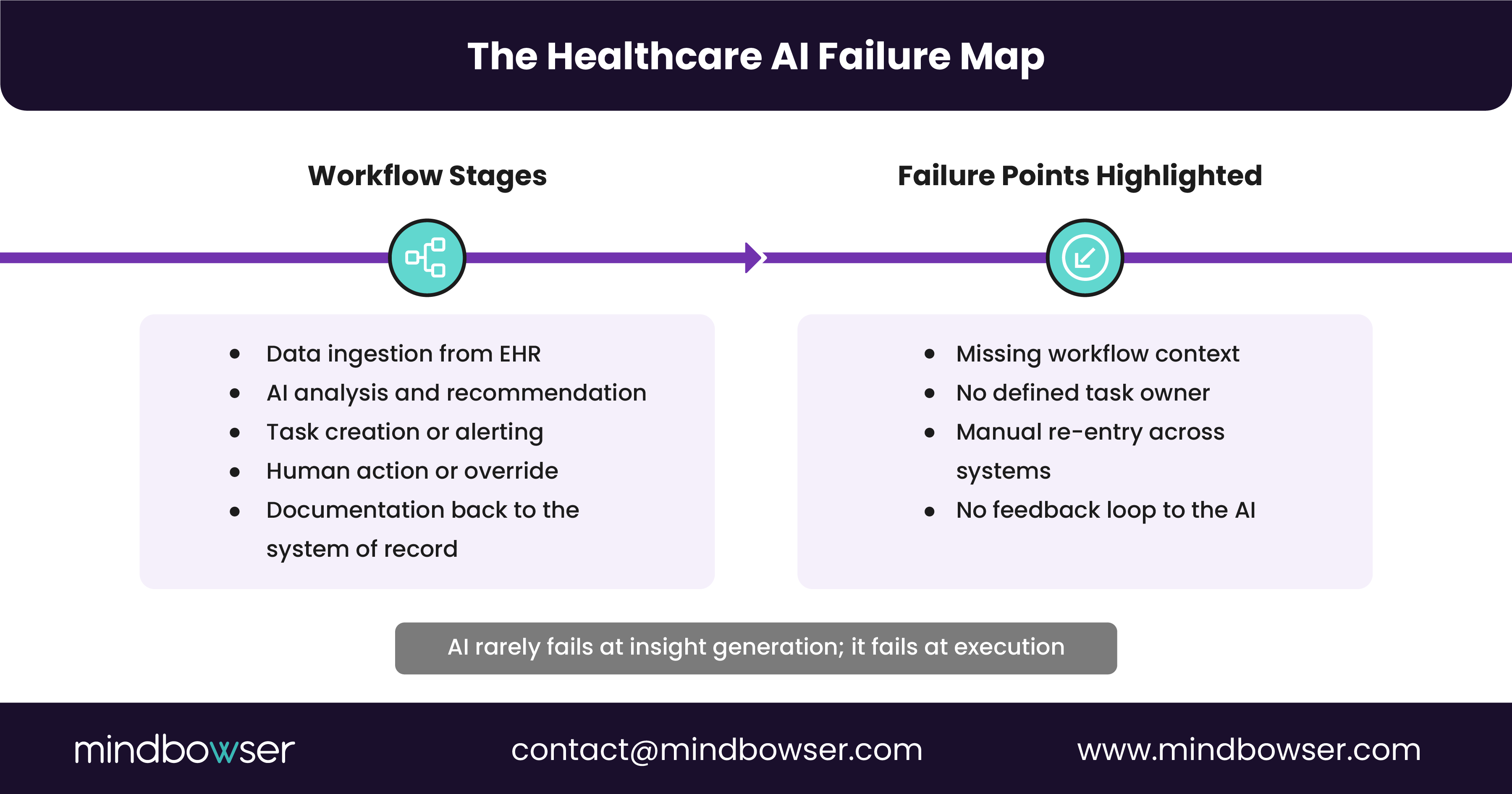

Most healthcare AI failures do not happen at the model level. They happen at the point where AI agents are expected to operate inside live clinical and operational workflows.

Based on real implementation conversations, the breakdown typically shows up in three places.

A. AI Agents Sit Outside the EHR, Not Inside the Workflow

Many AI agents are deployed as external tools that pull data from the EHR but do not live within clinician workflows.

That creates friction immediately.

- Clinicians are forced to switch contexts between systems

- AI recommendations arrive without the surrounding clinical context

- Outputs are delivered after decisions have already been made

When AI agents are not embedded directly within EHR-native workflows, they are at best advisory and at worst ignored.

B. Interoperability Is Treated as a Data Pipe, Not a Workflow Contract

Most implementations assume that if data can move via FHIR, HL7, or APIs, the problem is solved.

In reality:

- Data arrives late, incomplete, or without ownership

- Downstream systems interpret the same data differently

- No one is accountable for what happens after the AI produces an output

AI agents do not fail because data is unavailable. They fail because no one defines how their outputs should be acted on, by whom, and within which system.

C. Automation Without Guardrails Breaks Trust Fast

AI agents are often introduced with the promise of automation, but without clear guardrails.

That leads to:

- Unclear access control and role-based permissions

- Limited auditability of AI-driven actions

- Risk teams stepping in after issues surface, not before

Once clinicians and compliance teams lose confidence in how AI decisions are made or logged, adoption drops rapidly.

The common thread across all three failure points is that AI agents are being layered onto existing systems without redesigning the workflows they are intended to support.

II. Why Interoperability Alone Does Not Fix Healthcare AI Failures

Interoperability is often positioned as the cure for healthcare AI breakdowns. If systems can exchange data cleanly, AI agents should work reliably.

In practice, that assumption does not hold.

Most healthcare environments already have interoperability in place. Data flows among EHRs, care management platforms, CRMs, and analytics tools daily. Yet AI agents still fail after go-live.

Here is why.

A. Data Movement Does Not Equal Workflow Alignment

FHIR APIs and HL7 interfaces move data, not intent.

AI agents need more than patient data. They need to understand:

- Where in the workflow a decision is being made

- Who owns the next action

- What constraints exist based on role, policy, or timing

Without this context, AI outputs arrive disconnected from real operational decision points.

B. Interoperability Breaks at the Edges

Most integrations are designed for system-to-system communication rather than for autonomous agents operating across systems.

This creates gaps such as:

- AI agents generating insights in one platform that must be manually re-entered into another

- No feedback loop when AI recommendations are accepted, modified, or ignored

- Limited visibility into the downstream impact

Over time, these gaps create silent failure. The AI continues to run, but no one uses it.

C. Compliance and Auditability Are Bolted On Too Late

When interoperability is treated purely as a technical layer, compliance becomes an afterthought.

That leads to:

- Incomplete audit trails for AI-driven decisions

- Unclear data provenance when multiple systems contribute inputs

- Delayed security and access reviews

In regulated environments, these gaps can cause programs to stall or be rolled back.

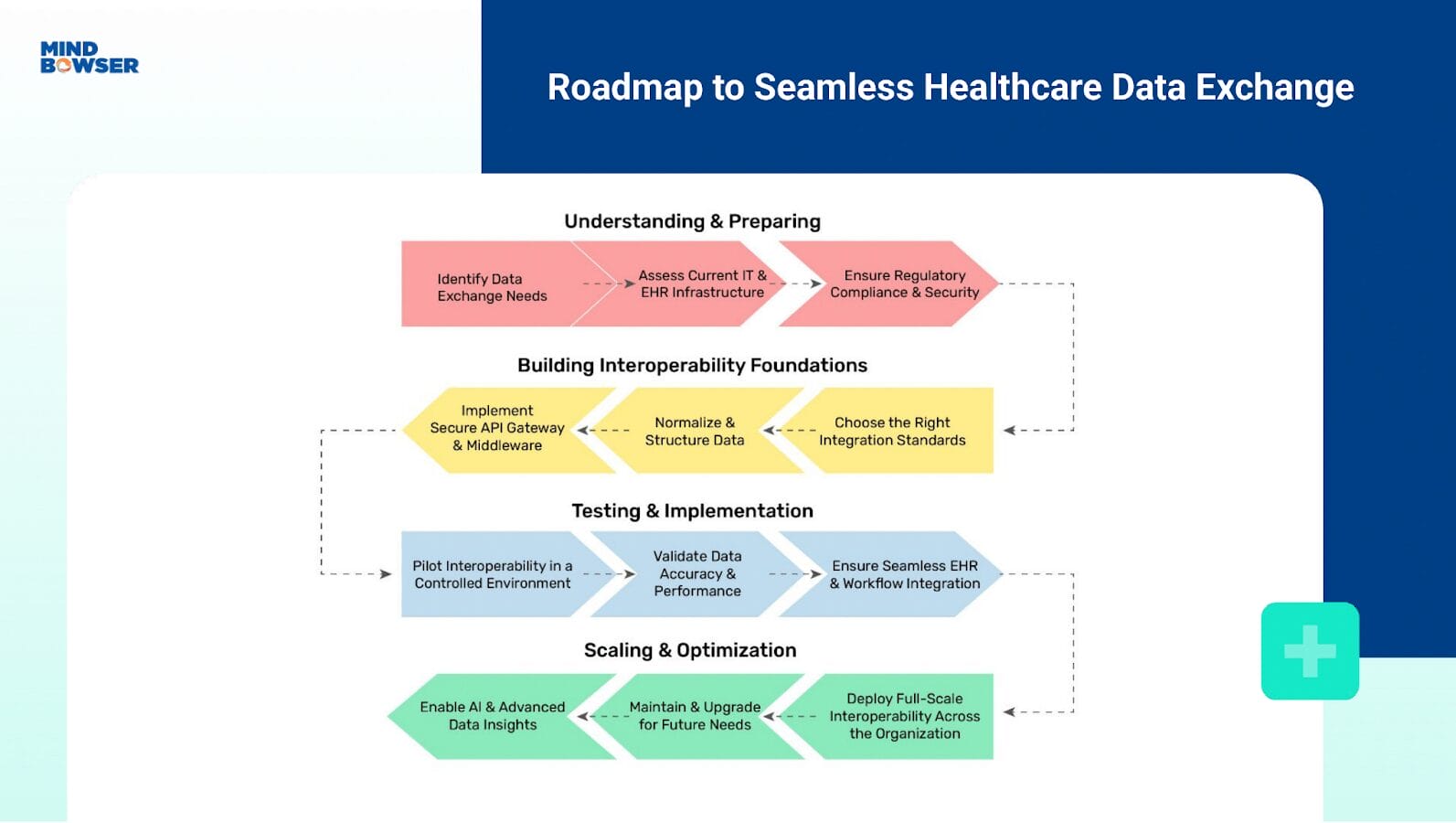

The takeaway is simple. Interoperability is necessary, but it is not sufficient. Without workflow ownership, operational accountability, and compliance-first design, AI agents cannot scale safely or sustainably.


III. What a Workflow-First AI Implementation Looks Like in Healthcare

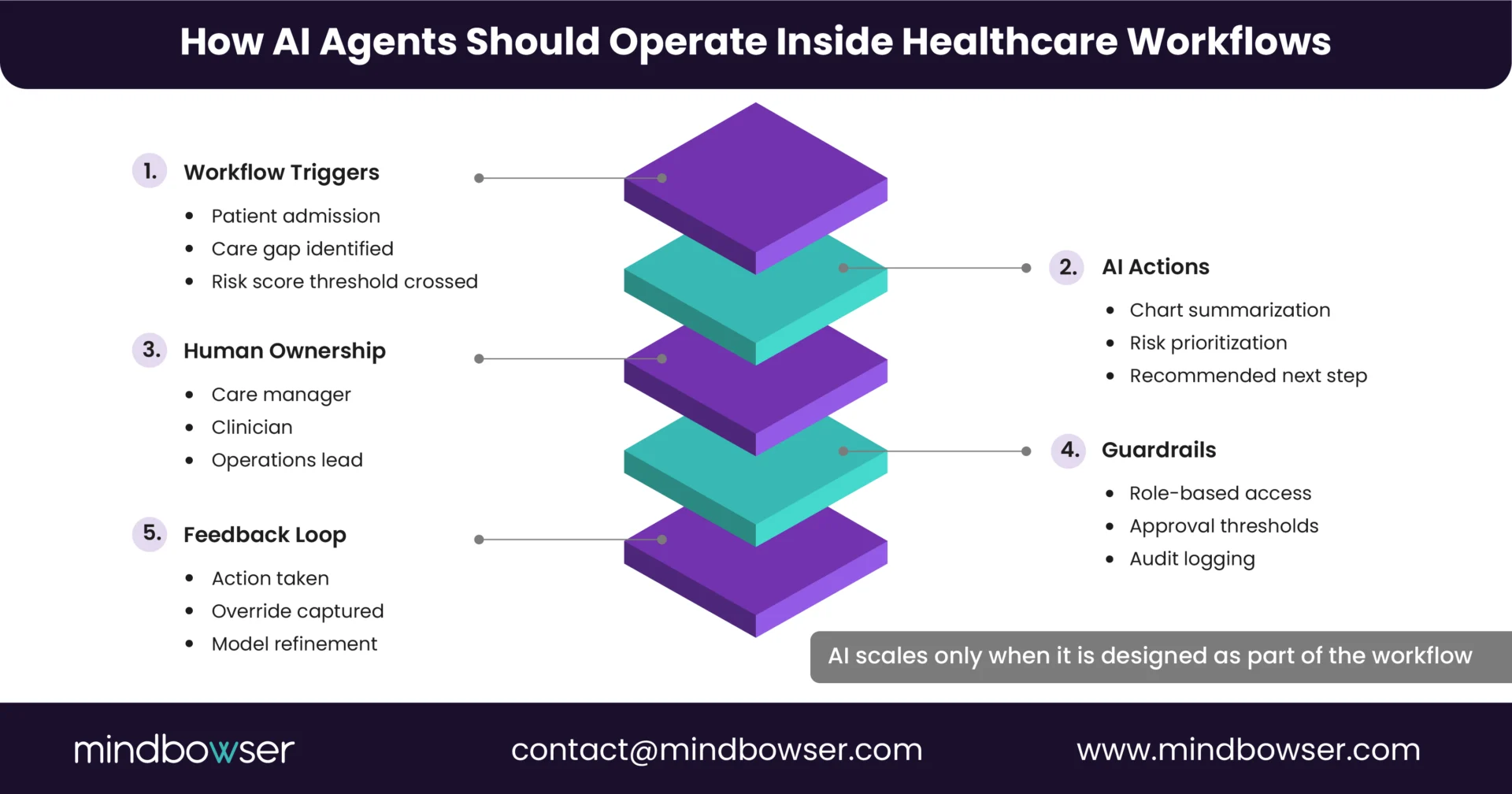

AI agents succeed in healthcare when they are designed around workflows first, not models or integrations.

In successful implementations, AI is treated as an operational participant rather than a standalone capability.

Here is what that looks like in practice.

A. AI Is Anchored to Specific Workflow Moments

Instead of deploying broad AI capabilities, teams define where AI can safely and reliably add value.

For example:

- Pre-visit chart review and summarization before clinician handoff

- Care gap identification during care manager outreach

- Risk stratification tied to daily population health queues

Each AI action is mapped to a clear trigger, owner, and downstream step inside the existing workflow.
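One way to make that mapping explicit is to record it as data that can be reviewed and checked. The following is a minimal sketch, not a reference implementation: the `WorkflowBinding` type, the trigger names, the role names, and the `unbound_actions` helper are all hypothetical and chosen only to mirror the three examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowBinding:
    """Ties one AI action to a trigger, an owner, and a downstream step."""
    ai_action: str        # what the agent produces
    trigger: str          # workflow event that starts the action
    owner_role: str       # role accountable for acting on the output
    downstream_step: str  # where the output lands in the existing workflow


# Hypothetical bindings; names are illustrative assumptions, not a standard.
BINDINGS = [
    WorkflowBinding("chart_summary", "visit_scheduled", "clinician", "pre_visit_inbox"),
    WorkflowBinding("care_gap_list", "outreach_started", "care_manager", "outreach_task_list"),
    WorkflowBinding("risk_score", "daily_queue_refresh", "pop_health_analyst", "population_health_queue"),
]


def unbound_actions(ai_actions, bindings):
    """Return AI actions that lack a complete trigger/owner/downstream mapping."""
    bound = {
        b.ai_action
        for b in bindings
        if b.trigger and b.owner_role and b.downstream_step
    }
    return [action for action in ai_actions if action not in bound]
```

Running `unbound_actions` against the list of capabilities a team plans to deploy gives a quick pre-rollout check: anything it returns is an AI output that nobody has yet agreed to own.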

B. EHR-Native or EHR-Adjacent by Design

Workflow-first implementations minimize context switching.

That means:

- AI outputs surface inside the EHR or care management system that clinicians already use

- Recommendations align with existing task lists, inboxes, or queues

- Documentation and updates flow back into the system of record automatically

When AI is embedded where work already occurs, adoption follows naturally.

C. Defined Guardrails, Not Open-Ended Automation

Automation is introduced selectively.

Effective teams define:

- Which actions AI can recommend versus execute

- Role-based access and approval thresholds

- Full audit logs for every AI-assisted decision

This builds trust with clinicians, compliance teams, and leadership from day one.
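The three guardrails above can be sketched as a small policy check. This is an illustrative example under stated assumptions: the action names, role names, `EXECUTE_POLICY` table, and `AuditLog` class are hypothetical, and a production system would rely on the access-control and audit facilities of the EHR or identity platform instead of an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: roles allowed to approve execution of each action.
# Any action missing from this table is treated as recommend-only.
EXECUTE_POLICY = {
    "schedule_follow_up": {"care_manager", "clinician"},
    "update_problem_list": {"clinician"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; stands in for a durable, append-only store."""
    entries: list = field(default_factory=list)

    def record(self, action, role, decision):
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "role": role,
            "decision": decision,
        })


def decide(action, approver_role, log):
    """Return 'execute' only when policy allows it for this role.

    Every decision, including recommend-only outcomes, is written to the log.
    """
    allowed_roles = EXECUTE_POLICY.get(action, set())
    decision = "execute" if approver_role in allowed_roles else "recommend_only"
    log.record(action, approver_role, decision)
    return decision
```

Defaulting unknown actions to recommend-only keeps the agent in advisory mode until someone explicitly grants it more autonomy.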

D. Feedback Loops Are Built In

AI agents improve only when feedback is captured.

Workflow-first designs ensure:

- Clinician actions feed back into model tuning

- Exceptions and overrides are tracked, not ignored

- Performance is measured against operational KPIs, not demo success

This turns AI from a static tool into a continuously improving system.
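As a rough illustration of tracking overrides, the sketch below tallies how clinicians responded to each recommendation. The event shape, the three outcome labels, and the `summarize_feedback` function are assumptions made for this example; real feedback would come from whatever task or inbox system surfaces the recommendation.

```python
from collections import Counter

# Outcome labels assumed for this sketch.
VALID_OUTCOMES = {"accepted", "modified", "ignored"}


def summarize_feedback(events):
    """Count clinician responses per outcome and compute the override rate.

    `events` is a list of (recommendation_id, outcome) tuples.
    Override rate is the share of recommendations that were modified or ignored.
    """
    counts = Counter()
    for _rec_id, outcome in events:
        if outcome not in VALID_OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome}")
        counts[outcome] += 1
    total = sum(counts.values())
    overrides = counts["modified"] + counts["ignored"]
    override_rate = overrides / total if total else 0.0
    return {"counts": dict(counts), "override_rate": override_rate}
```

A rising override rate for one workflow is an early signal that the agent's output no longer matches how that team works, well before usage dashboards show a decline.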

The difference between AI that scales and AI that stalls is not sophistication. It is alignment with how care is delivered in practice.

IV. Key Takeaways for CIOs and CTOs Scaling Healthcare AI

Most healthcare AI initiatives do not fail because the technology is immature. They fail because the organization underestimates the operational complexity of deploying AI inside regulated, workflow-heavy environments.

From real-world implementations, a few patterns are clear.

- AI agents break after go-live when they are layered onto fragmented workflows instead of being designed into them

- Interoperability enables data exchange, but without workflow ownership and accountability, AI outputs go unused

- EHR-native or EHR-adjacent delivery is critical for adoption and trust

- Compliance, auditability, and access control must be designed upfront, not retrofitted

- Sustainable AI programs measure success by workflow impact, not pilot performance

For CIOs and CTOs, the question is no longer whether AI can work in healthcare. It is whether the organization is ready to support AI operationally.

V. Next Step: De-Risk AI Before You Scale

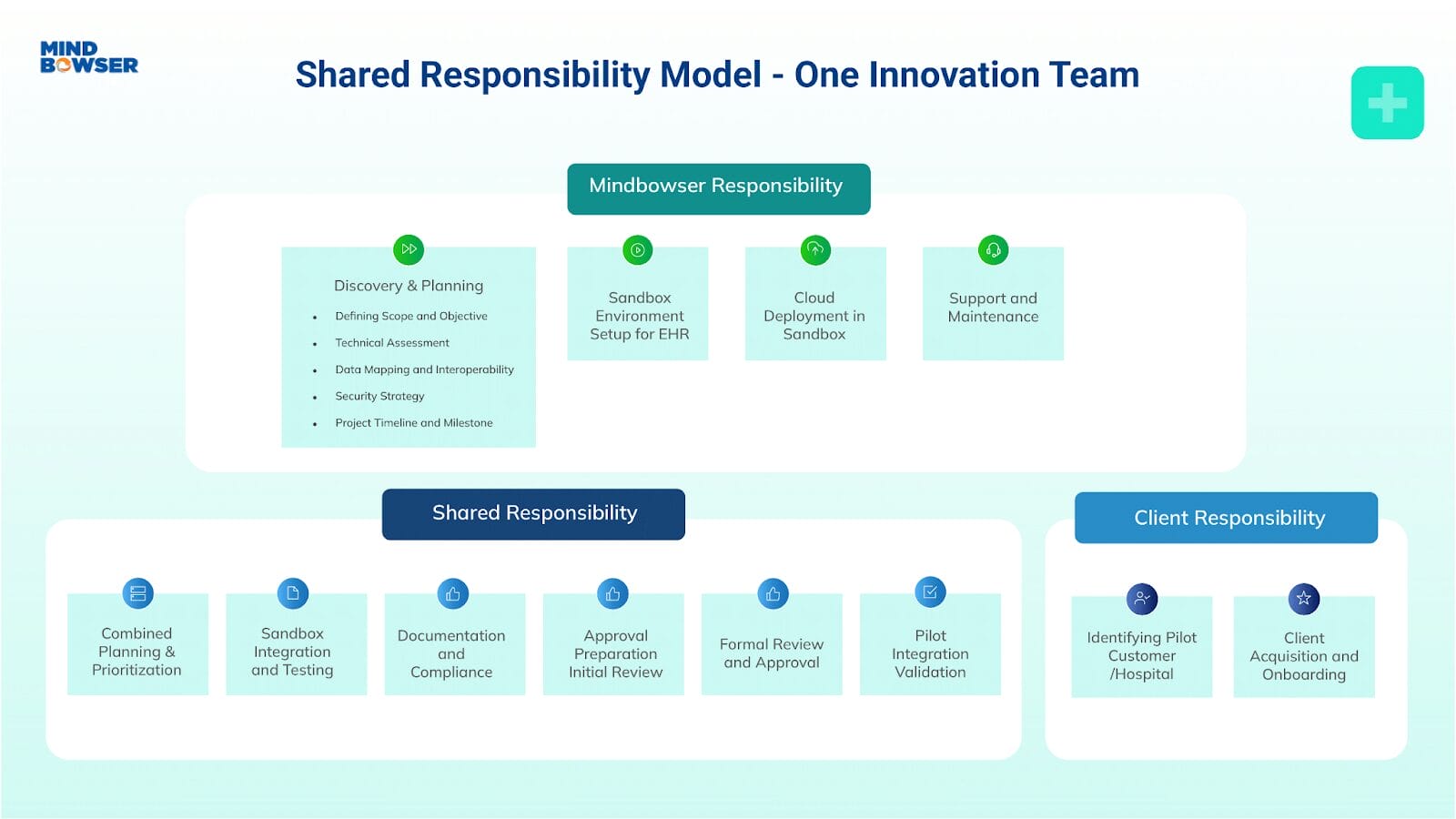

Before expanding AI agents across care coordination, population health, or clinical operations, most teams benefit from a structured assessment.

That typically includes:

- Mapping AI use cases to real clinical and operational workflows

- Identifying interoperability gaps that affect execution, not just data access

- Defining compliance guardrails, ownership, and audit requirements

- Pressure-testing AI outputs inside EHR workflows before full rollout

This approach reduces rework, preserves clinician trust, and accelerates time-to-value.

Pressure-testing your AI roadmap against real-world workflow and interoperability constraints usually surfaces issues that pilots never reveal.

Most AI governance challenges emerge when agents span clinical, operational, and population health workflows, requiring centralized ownership, clear escalation paths, and shared accountability models across IT, compliance, and care teams.

Even well-integrated AI agents fail without structured onboarding, role-specific training, and clear communication on how AI outputs should influence daily decisions.

Post–go-live ROI should be tied to workflow outcomes such as time-to-action, reduction in manual follow-ups, documentation turnaround, and avoidance of operational rework, rather than to model accuracy or usage dashboards.
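Time-to-action, for instance, can be computed directly from two timestamps per AI output. The following is a minimal sketch that assumes each record holds the time an output surfaced and the time someone acted on it; the function name and record shape are hypothetical.

```python
from datetime import datetime
from statistics import median


def median_time_to_action_minutes(records):
    """Median minutes between an AI output surfacing and a human acting on it.

    `records` is a list of (surfaced_at, acted_at) datetime pairs. Outputs
    that were never acted on (acted_at is None) are skipped here and should
    be reported separately as unused outputs.
    """
    deltas = [
        (acted_at - surfaced_at).total_seconds() / 60
        for surfaced_at, acted_at in records
        if acted_at is not None
    ]
    return median(deltas) if deltas else None
```

The median is used instead of the mean so that a handful of outputs left untouched over a weekend does not dominate the figure.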

Whether an AI agent should act autonomously or stay advisory depends on workflow criticality, regulatory exposure, and risk tolerance. Most organizations start in advisory mode and expand autonomy only after auditability and trust are established.

Without clearly defined boundaries between AI recommendations and human judgment, organizations risk ambiguity in clinical responsibility, making upfront legal and compliance alignment essential.
