TL;DR

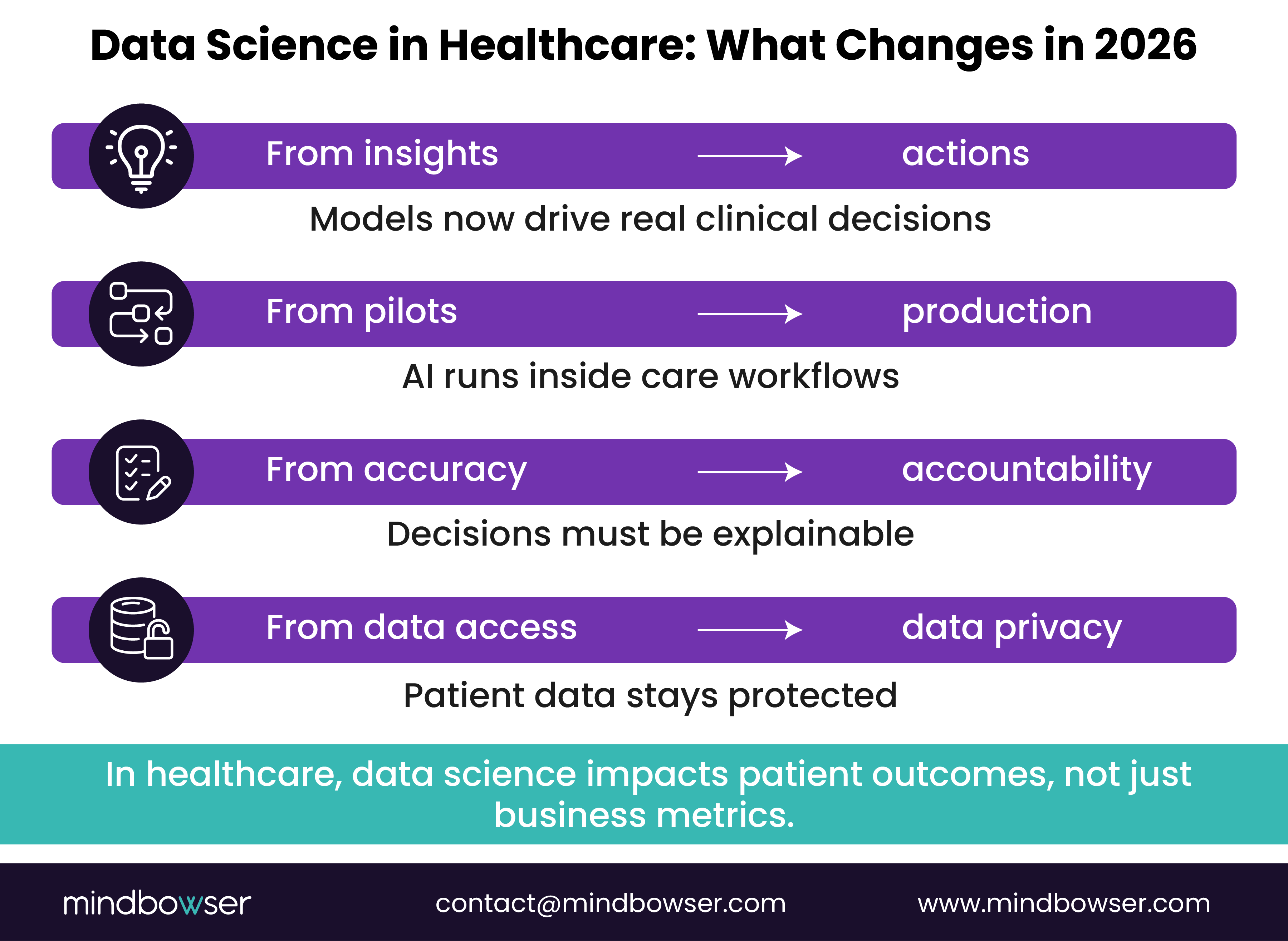

- Data science has shifted from experimentation to real-world execution, especially in healthcare, where decisions impact patient outcomes.

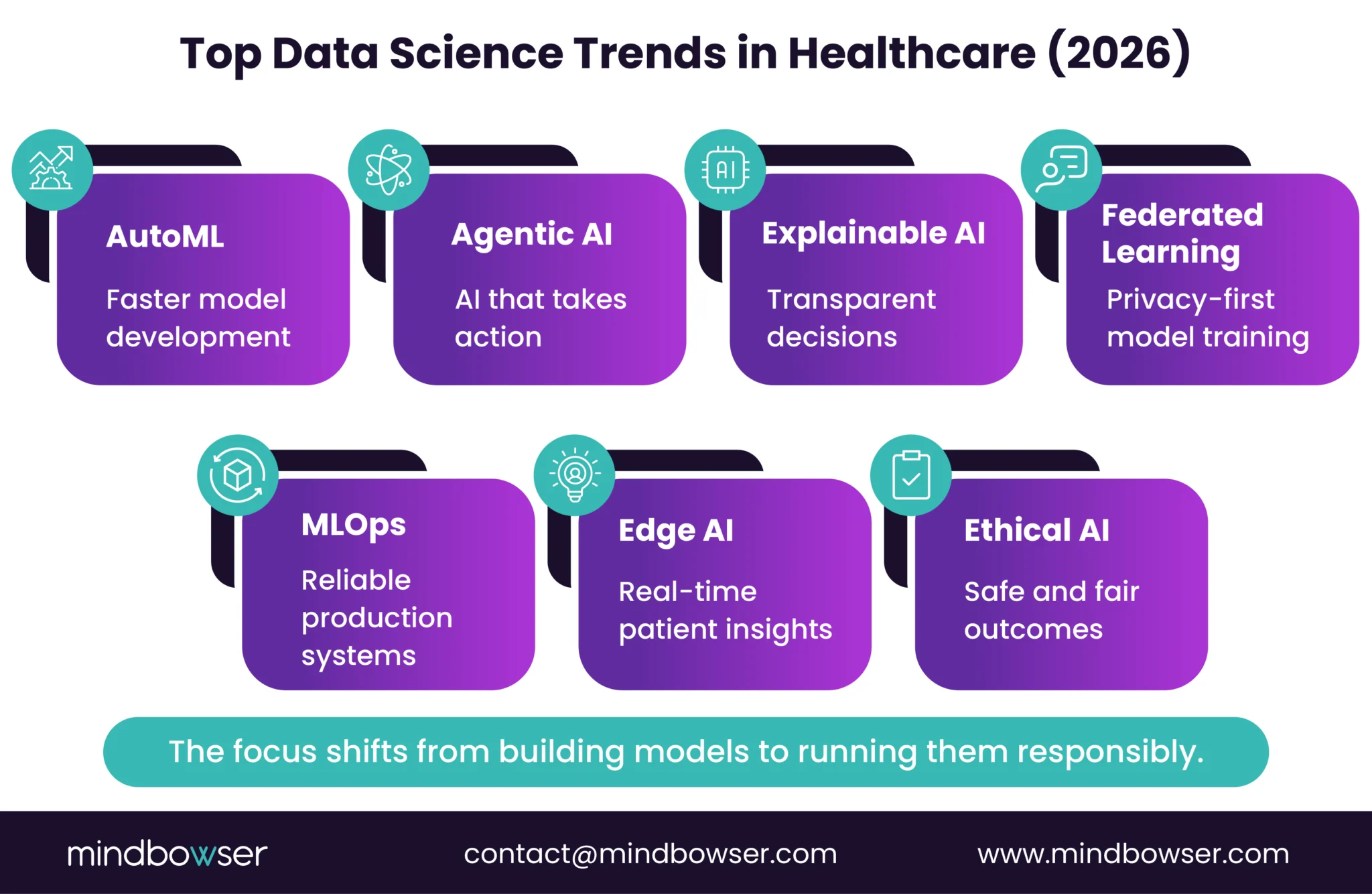

- Trends like AutoML, agentic AI, explainable AI, federated learning, and MLOps are helping organizations move faster while staying compliant and trustworthy.

- The focus is no longer on building models, but on deploying them responsibly within clinical workflows, ensuring transparency, privacy, and measurable impact.

- Teams that treat data science as core infrastructure, not a side project, will lead in efficiency, outcomes, and growth.

“What happens when your largest customer, regulator, or board asks you to explain exactly how your AI made a decision?”

In 2026, that question defines whether data science creates trust or risk.

Data science now sits at the core of clinical outcomes, care delivery efficiency, and healthcare innovation. Healthcare leaders are expected to move fast, deploy AI in production, and prove that models are accurate, secure, explainable, and aligned with clinical workflows.

The investment surge makes this clear. Global AI spending reached $244B in 2025 and is projected to hit $632B by 2028, according to IDC.

This article breaks down the data science trends that matter most in 2026, with a practical lens on what actually scales in healthcare environments where compliance, patient safety, and outcomes matter.

Bottom line: data science is no longer experimental. It is operational infrastructure.

I. The Data Science Trends Defining 2026

A. AutoML Becomes the Default Entry Point for Machine Learning

“Speed is the new skill shortage.”

In 2026, AutoML is no longer just a productivity boost for data teams. It is becoming the default way healthcare organizations start and scale machine learning.

This is especially important for healthcare organizations that need to deploy predictive models without building large in-house data science teams.

AutoML platforms now automate data preparation, feature engineering, model selection, tuning, and deployment. What once took weeks of manual effort can now be done in days, sometimes hours.
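The following is a minimal sketch, in plain Python, of the core loop behind that automation. It tries several candidate models and hyperparameters, scores each with k-fold cross-validation, and keeps the best. Several things here are illustrative assumptions: the model families (k-nearest neighbors and nearest centroid), the search space, and the function names. Commercial platforms search far larger spaces and also automate data preparation, feature engineering, and deployment.

```python
import math
import random
from statistics import mean


def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def fit_knn(X, y, k):
    # k-NN "training" just stores the data; prediction is a majority vote of the k nearest rows.
    def predict(row):
        nearest = sorted(zip(X, y), key=lambda pair: euclidean(pair[0], row))[:k]
        labels = [label for _, label in nearest]
        return max(set(labels), key=labels.count)
    return predict


def fit_centroid(X, y):
    # Nearest centroid: store one mean vector per class, predict the closest one.
    centroids = {}
    for label in set(y):
        rows = [row for row, lbl in zip(X, y) if lbl == label]
        centroids[label] = [mean(col) for col in zip(*rows)]

    def predict(row):
        return min(centroids, key=lambda c: euclidean(centroids[c], row))
    return predict


def candidate_models():
    # The search space: model families crossed with their hyperparameters.
    for k in (1, 3, 5):
        yield f"knn(k={k})", (lambda X, y, k=k: fit_knn(X, y, k))
    yield "nearest_centroid", fit_centroid


def cross_val_accuracy(fit, X, y, folds=5, seed=0):
    # Shuffle once, then hold out every `folds`-th row in turn as the test fold.
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    scores = []
    for f in range(folds):
        test = set(idx[f::folds])
        train = [i for i in idx if i not in test]
        model = fit([X[i] for i in train], [y[i] for i in train])
        correct = sum(model(X[i]) == y[i] for i in test)
        scores.append(correct / len(test))
    return mean(scores)


def auto_select(X, y):
    # Score every candidate the same way and keep the best one.
    results = {name: cross_val_accuracy(fit, X, y) for name, fit in candidate_models()}
    best = max(results, key=results.get)
    return best, results
```

Calling `auto_select(X, y)` on a labeled dataset returns two things: the winning candidate's name and every candidate's cross-validated accuracy. That score table is also the record a reviewer needs when validating the choice.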

The market growth reflects this shift. The AutoML market is projected to grow from $2.35B to $10.93B by 2029, a 47 percent CAGR, according to Research and Markets.

1. Why AutoML is accelerating

- Data science talent is scarce and expensive

- Business teams want faster experimentation

- Time-to-production matters more than model perfection

AutoML eliminates repetitive work, allowing teams to focus on higher-impact problems.

2. Where AutoML delivers value

i. In healthcare

- Faster development of risk, triage, and prediction models

- Reduced dependency on large in-house data science teams

- Quicker validation of use cases like readmission and utilization risk

ii. In SaaS

- Rapid testing of churn and pricing models

- Easier rollout of ML features across products

- Faster iteration without rebuilding pipelines

The leadership reality

AutoML does not eliminate the need for governance. Models still require validation, monitoring, and ethical oversight, especially in regulated environments.

AutoML is not about convenience. It is about speed, consistency, and getting reliable models into production faster.

B. Agentic AI Turns Insights Into Autonomous Action

“Insights don’t scale. Execution does.”

Agentic AI is one of the most important shifts in data science for 2026. Instead of generating predictions and stopping there, agentic systems plan, decide, and execute multi-step tasks on their own.

These AI agents can break down goals, interact with tools and data sources, and adjust actions based on outcomes. The result is a move from decision support to decision execution.
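The plan, act, and adjust cycle can be sketched as a small loop. This is a hypothetical illustration. The care-coordination tools are stubs, and the rule-based `plan` function stands in for the planner a real agent would use (often an LLM). The agent runs each planned step and records the outcome. If a step fails, it retries once, then halts for human escalation if the retry also fails.

```python
# Hypothetical tools. In production these would call an EHR, a scheduler, or a messaging API.
def find_high_risk_patients(state):
    state["patients"] = [p for p in state["census"] if p["risk"] >= 0.7]
    return f"found {len(state['patients'])} high-risk patients"


def schedule_follow_ups(state):
    state["scheduled"] = [p["id"] for p in state["patients"]]
    return f"scheduled {len(state['scheduled'])} follow-ups"


def verify_schedule(state):
    # Observe the outcome: fail loudly if any high-risk patient was missed.
    missing = {p["id"] for p in state["patients"]} - set(state.get("scheduled", []))
    if missing:
        raise RuntimeError(f"unscheduled patients: {sorted(missing)}")
    return "all high-risk patients scheduled"


TOOLS = {
    "find_high_risk_patients": find_high_risk_patients,
    "schedule_follow_ups": schedule_follow_ups,
    "verify_schedule": verify_schedule,
}


def plan(goal):
    # Stand-in planner: break a known goal into an ordered list of tool calls.
    plans = {
        "follow up high-risk patients": [
            "find_high_risk_patients",
            "schedule_follow_ups",
            "verify_schedule",
        ],
    }
    if goal not in plans:
        raise ValueError(f"no plan for goal: {goal!r}")
    return plans[goal]


def run_agent(goal, state, max_retries=1):
    log = []
    for step in plan(goal):
        for _ in range(max_retries + 1):
            try:
                log.append((step, "ok", TOOLS[step](state)))
                break
            except Exception as exc:  # record the failure, then try again
                log.append((step, "error", str(exc)))
        else:
            # Every attempt failed: stop and hand off instead of pressing on.
            log.append((step, "halted", "escalated to a human"))
            break
    return log
```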

Why agentic AI matters now

- Too many insights never lead to action

- Manual handoffs slow down operations

- AI is expected to run, not just recommend

Agentic AI closes the gap between analysis and outcomes.

Real-world impact

i. In healthcare

- Automated care coordination and follow-ups

- Dynamic prioritization of high-risk patients

- Less administrative burden on clinical and back-office staff

ii. In SaaS

- Automated follow-ups and handoffs in customer operations

- Revenue operations that react in near real time

- Product intelligence that triggers action, not reports

iii. What leaders must control

Agentic systems amplify both value and risk:

- Guardrails are required to prevent over-automation

- Audit trails must be built in from day one

- Human override remains essential in regulated settings
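One way to build those controls into the execution path is to route every agent action through a guarded executor. Several pieces of this sketch are hypothetical: the action names, the allowlist, and the `approver` callback, which stands in for a clinician review queue. The point is that every action ends up in the same audit trail, whether it was blocked, rejected by a human, or executed.

```python
from datetime import datetime, timezone

# Hypothetical policy: what the agent may do, and what needs a human sign-off first.
ALLOWED_ACTIONS = {"send_reminder", "create_task", "update_care_plan"}
REQUIRES_APPROVAL = {"update_care_plan"}


class GuardedExecutor:
    def __init__(self, approver):
        # approver(action, payload) -> bool; stands in for a clinician review step.
        self.approver = approver
        self.audit_log = []

    def _record(self, action, payload, outcome):
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "payload": payload,
            "outcome": outcome,
        })

    def execute(self, action, payload, handler):
        # Guardrail 1: anything outside the allowlist is blocked outright.
        if action not in ALLOWED_ACTIONS:
            self._record(action, payload, "blocked: not allowlisted")
            return False
        # Guardrail 2 (human override): high-impact actions wait for a human decision.
        if action in REQUIRES_APPROVAL and not self.approver(action, payload):
            self._record(action, payload, "rejected by human reviewer")
            return False
        handler(payload)
        self._record(action, payload, "executed")
        return True
```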

Agentic AI changes what data science delivers. In 2026, the goal is not better predictions. It is better execution.

C. Explainable AI Becomes a Buying Requirement

“If you can’t explain the decision, you can’t defend it.”

That is now the unspoken rule in enterprise AI.

In 2026, explainability is no longer optional. It is a gatekeeper for adoption, procurement, and scale.

AI is now embedded across clinical workflows, revenue models, and customer-facing products. As a result, leaders are being asked to justify outcomes in plain language, not model theory.

The adoption curve explains the pressure:

Eighty-seven percent of enterprises now use AI, a 23 percent year-over-year increase, according to Second Talent. As usage expands, so does scrutiny.

Why explainability matters now

- Regulators want traceability, not accuracy alone

- Buyers demand transparency during security and compliance reviews

- Executives need confidence before signing off on AI-driven decisions

What this looks like in practice

i. In healthcare

- Clinicians need to know why a patient is flagged as high risk

- Care teams must justify treatment recommendations

- Audits and reviews require clear reasoning paths, not black boxes

ii. In SaaS

- Enterprise buyers ask how pricing, scoring, or recommendations work

- Sales cycles stall when AI logic cannot be explained

- Trust erodes when outcomes feel arbitrary

How teams are responding

Modern explainable AI is not about oversimplifying models. It is about making behavior observable:

- Feature attribution to show key drivers

- Surrogate models for human-readable explanations

- Counterfactuals that answer “what would change the outcome?”
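For a linear or logistic risk model, feature attribution and counterfactuals can be computed exactly. That makes such a model a useful way to see what these techniques return. The sketch assumes a hypothetical readmission model with made-up weights on standardized features. For non-linear models, teams typically turn to approximation methods such as SHAP or LIME instead. Surrogate models are not shown.

```python
import math

# Hypothetical readmission-risk model on standardized features (illustrative weights, not clinical).
WEIGHTS = {"prior_admissions": 0.8, "age": 0.3, "hba1c": 0.5, "med_adherence": -0.6}
BIAS = -1.0
BASELINE = {name: 0.0 for name in WEIGHTS}  # the "average patient" on a standardized scale


def logit(x):
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def risk(x):
    return 1 / (1 + math.exp(-logit(x)))


def attributions(x):
    # Feature attribution: each feature's contribution to the log-odds relative to the baseline.
    # For a linear model these contributions sum exactly to logit(x) - logit(BASELINE).
    return {f: WEIGHTS[f] * (x[f] - BASELINE[f]) for f in WEIGHTS}


def counterfactual(x, feature, threshold=0.5):
    # Counterfactual: the value of one feature that would put this patient exactly at the
    # alert threshold, holding every other feature fixed.
    target = math.log(threshold / (1 - threshold))
    return x[feature] + (target - logit(x)) / WEIGHTS[feature]
```

Take a patient whose score is driven mainly by prior admissions. `attributions` ranks that feature first. `counterfactual(patient, "med_adherence")` answers a different question: how much would adherence have to improve, all else equal, to bring the patient below the alert threshold?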

In 2026, explainability is no longer a feature. It is a prerequisite. If your AI cannot be explained, it will not survive enterprise scrutiny.

D. Federated Learning Makes Privacy a Feature, Not a Tradeoff

“What if you could train better models without ever moving the data?”

That question is pushing federated learning from research labs into real healthcare and SaaS deployments in 2026.

Federated learning allows machine learning models to train across decentralized data sources while keeping sensitive data local. Instead of pulling data into a central warehouse, the model moves to the data, learns, and returns only updated parameters.
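The "model moves to the data" loop is usually implemented with federated averaging (FedAvg). This minimal sketch simulates the sites in one process and assumes a simple linear model with toy per-site datasets. Each site runs a few steps of gradient descent on its own records and returns only its parameters and sample count. The coordinator then averages those parameters, weighted by sample count. Production frameworks add secure aggregation, differential privacy, and handling for sites that drop out.

```python
def local_update(params, data, lr=0.1, epochs=20):
    # Runs inside each site: gradient descent on mean squared error for y ~ w*x + b.
    # Only the updated (w, b) and the sample count leave the site, never the rows.
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b), n


def federated_averaging(sites, rounds=50):
    # Coordinator: broadcast global params, collect local updates, average them by sample count.
    params = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(params, data) for data in sites]
        total = sum(n for _, n in updates)
        params = (
            sum(p[0] * n for p, n in updates) / total,
            sum(p[1] * n for p, n in updates) / total,
        )
    return params
```

Each entry in `sites` plays the role of one hospital's private dataset. Even when the sites cover different ranges of patients, the averaged model learns the shared relationship without any site exporting records.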

Why federated learning is accelerating now

- Privacy regulations are tightening, not loosening

- Data residency rules complicate centralization

- Healthcare data sharing remains fragmented

For many organizations, the old model of “collect everything first” is no longer viable.

What this unlocks

i. In healthcare

- Train models across hospitals without sharing PHI

- Improve predictive accuracy using broader patient populations

- Enable collaboration without compromising patient privacy

ii. In SaaS

- Learn from customer usage patterns without exporting raw data

- Support enterprise buyers with strict data isolation requirements

- Expand analytics without increasing security risk

iii. Where leaders must be careful

Federated learning introduces operational complexity:

- Model updates must be coordinated across nodes

- Data drift can hide inside local environments

- Monitoring and version control become harder

Teams that succeed treat federated learning as an architecture decision, not just an algorithm choice.

Bottom line: federated learning flips the script. In 2026, privacy is no longer a blocker to better models. It is the mechanism that makes them possible.

E. Edge AI Pushes Intelligence Closer to the Moment of Action

“If insight arrives too late, it’s just reporting.”

Edge AI is about where decisions happen, not just how accurate they are. In 2026, more data science workloads are moving out of the cloud and closer to devices, sensors, and users.

The economics support the shift. The Edge AI market is projected to grow from $25.65B to $143B by 2034, a 21 percent CAGR, according to Precedence Research.

Why edge AI is gaining ground

- Latency kills real-time decisions

- Bandwidth costs add up fast

- Always-on connectivity is not guaranteed

Edge AI reduces dependence on centralized infrastructure by running models locally.

Real-world impact

i. In healthcare

- Real-time patient monitoring and early alerts

- Faster clinical response in remote, rural, and acute settings

- Reduced reliance on constant cloud connectivity

ii. In SaaS and IoT-driven platforms

- On-device personalization and recommendations

- Predictive maintenance without cloud round-trips

- Better performance in constrained environments

iii. The tradeoffs leaders must manage

- Models must be smaller and more efficient

- Updates and retraining require careful orchestration

- Security shifts from the cloud to the device
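A common way to make models smaller for edge devices is post-training quantization. This sketch assumes symmetric, per-tensor int8 quantization of a flat list of weights. Each 32-bit float becomes an integer in [-127, 127], and all of them share one scale factor. That cuts storage by 4x at the cost of a rounding error of at most half the scale. Real toolchains quantize per layer or per channel and calibrate activations as well.

```python
from array import array


def quantize_int8(weights):
    # Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127].
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    # On the device, weights are reconstructed (or used directly) as integer * scale.
    return [v * scale for v in q]


def storage_bytes(weights, q):
    # Compare float32 storage ("f") with signed 8-bit storage ("b").
    return len(array("f", weights).tobytes()), len(array("b", q).tobytes())
```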

Bottom line: edge AI turns data science into a real-time capability. In 2026, insight delayed is value denied.

F. MLOps Maturity Separates Experiments From Real Businesses

“If your model only works in a notebook, it doesn’t work.”

The gap between teams that build models and teams that run them is widening fast. The difference is MLOps maturity.

Most organizations already have models. Far fewer can deploy, monitor, govern, and retrain them reliably in production. As AI moves into revenue-critical and care-critical workflows, that gap becomes expensive.

Why MLOps is now a leadership concern

- AI failures now impact revenue and patient outcomes

- Manual deployments do not scale

- Regulators expect auditability and control

MLOps turns data science into an operational discipline, not an R&D exercise.

What mature MLOps looks like

i. Across healthcare and SaaS

- Versioned data, models, and features

- Automated testing before deployment

- Continuous monitoring for drift and bias

- Rollbacks when models misbehave
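Drift monitoring often starts with a simple distribution check on each input feature. This sketch computes the Population Stability Index (PSI) between a baseline sample, such as the training data, and recent production data. As a simplifying assumption it uses equal-width bins over the baseline's range; many implementations use quantile bins instead. A widely used rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating.

```python
import math


def psi(expected, actual, bins=10):
    # Bin edges come from the baseline ("expected") sample.
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into the edge bins
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```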

This is how teams move from “we built a model” to “we trust this model.”

ii. Healthcare reality

- Models must align with clinical workflows

- Retraining must not disrupt care delivery

- Audit trails are mandatory, not optional

Without MLOps, even accurate models stall at the pilot stage.
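Versioning, gated promotion, rollback, and an audit trail can all live in one place. This is a minimal in-memory sketch of a model registry. It assumes the caller passes in the result of pre-deployment checks as `checks_passed`. The class and method names are hypothetical, and a real deployment would use a persistent registry service rather than a Python object.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)  # version id -> model artifact
    history: list = field(default_factory=list)   # versions that have been live, newest last
    audit: list = field(default_factory=list)     # append-only record of every change

    def register(self, version, model, user):
        # Versions are immutable: re-registering an id is an error, not an overwrite.
        if version in self.versions:
            raise ValueError(f"version {version!r} already registered")
        self.versions[version] = model
        self._log("register", version, user)

    def promote(self, version, user, checks_passed):
        if version not in self.versions:
            raise KeyError(version)
        if not checks_passed:
            # Automated tests failed: keep the current model live and record the attempt.
            self._log("promote_blocked", version, user)
            return False
        self.history.append(version)
        self._log("promote", version, user)
        return True

    def rollback(self, user):
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        retired = self.history.pop()
        self._log(f"rollback_from_{retired}", self.history[-1], user)
        return self.history[-1]

    @property
    def live(self):
        return self.history[-1] if self.history else None

    def _log(self, event, version, user):
        self.audit.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "version": version,
            "user": user,
        })
```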

iii. SaaS reality check

- AI features ship faster than governance catches up

- Customer-facing failures damage trust quickly

- Platform teams need repeatable deployment patterns

MLOps gives SaaS leaders a way to scale intelligence without chaos.

Data science success is measured by uptime, reliability, and accountability. MLOps maturity is what makes AI safe to scale.

G. Data Visualization Shifts From Dashboards to Decision Narratives

“If leaders need a walkthrough to understand the chart, the chart failed.”

Data visualization is no longer about prettier dashboards. It is about the speed of comprehension.

Healthcare and SaaS executives are flooded with metrics. The winners are not the teams that show more data. They are the teams that tell a clear story that leads to action.

Why visualization is changing

- Decision windows are shrinking

- Many stakeholders are not technical

- AI outputs need human context

Static charts and dense dashboards slow things down. Narrative-driven visualization speeds them up.

What modern data storytelling looks like

Instead of asking leaders to explore dashboards, teams are:

- Framing insights around specific questions

- Highlighting what changed and why

- Making recommended actions obvious

Think fewer filters. More clarity.

Healthcare impact

- Clinicians see risk drivers, not just scores

- Population health teams spot trends without manual analysis

- Executives understand outcomes without statistical deep dives

Visualization becomes a trust layer between models and clinical decision-making.

SaaS impact

- Product teams track feature impact in context

- Revenue leaders understand churn drivers instantly

- Customers see value without needing training

Good visualization shortens sales cycles and aligns teams.

Tools are evolving, but principles matter more

The best teams focus on:

- Clear visual hierarchy

- Minimal cognitive load

- Consistent definitions across views

H. Ethical AI Moves From Policy Decks to Operating Reality

“Ethics only matter when they change how systems behave.”

Ethical AI is no longer a set of principles buried in slide decks. It is being enforced through architecture, process, and accountability.

As AI systems influence care decisions, pricing, eligibility, and access, leaders are being held responsible for outcomes, not intent. Bias, privacy failures, and opaque logic now carry real financial and reputational consequences.

Why ethical AI is operational now

- Regulators expect proof, not promises

- Patients and customers demand fairness

- Enterprises are accountable for AI outcomes

Ethics is no longer abstract. It shows up in audits, contracts, and lawsuits.

What ethical AI looks like in practice

i. Across healthcare and SaaS

- Bias testing is baked into model evaluation

- Clear consent and data usage boundaries

- Continuous monitoring, not one-time checks
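Baking bias testing into evaluation can be as simple as computing a few per-group metrics next to accuracy. This sketch compares two things across groups: selection rates (demographic parity) and true positive rates (equal opportunity). The check fails when either gap exceeds a tolerance. The 0.1 default tolerance is an assumption; the right metric and threshold depend on the use case and the applicable regulation.

```python
from collections import defaultdict


def group_metrics(y_true, y_pred, groups):
    # Per-group counts: rows, positive predictions, actual positives, true positives.
    stats = defaultdict(lambda: {"n": 0, "flagged": 0, "pos": 0, "tp": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        s = stats[g]
        s["n"] += 1
        s["flagged"] += p
        s["pos"] += t
        s["tp"] += t * p
    return {
        g: {
            "selection_rate": s["flagged"] / s["n"],
            "tpr": s["tp"] / s["pos"] if s["pos"] else float("nan"),
        }
        for g, s in stats.items()
    }


def fairness_gaps(y_true, y_pred, groups, tolerance=0.1):
    m = group_metrics(y_true, y_pred, groups)
    sel = [v["selection_rate"] for v in m.values()]
    tpr = [v["tpr"] for v in m.values() if v["tpr"] == v["tpr"]]  # drop NaN (groups with no positives)
    dp = max(sel) - min(sel)
    eo = max(tpr) - min(tpr) if tpr else 0.0
    return {
        "demographic_parity_gap": dp,
        "equal_opportunity_gap": eo,
        "passes": dp <= tolerance and eo <= tolerance,
        "by_group": m,
    }
```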

Responsible AI is built, not declared.

ii. Healthcare reality

- Biased models can worsen care disparities

- Privacy failures damage patient trust instantly

- Clinical AI must support, not override, clinical judgment

Ethical guardrails protect both patients and providers.

iii. SaaS reality

- Enterprise buyers now ask about fairness controls

- AI missteps stall deals and trigger churn

- Governance influences brand credibility

Teams that invest early avoid costly rework later.

Ethical AI is not a moral stance. It is a risk management strategy and a trust multiplier.

I. Data Science Talent Shifts Toward Hybrid Operators

“The most valuable data scientist in 2026 is not the smartest model builder. It is the one who can ship.”

The talent market is changing fast. In 2026, organizations are no longer hiring data scientists to live in notebooks. They are hiring operators who can move ideas into production.

The demand signal is clear. Data science jobs are projected to grow 36 percent by 2033, with average salaries around $165,000, according to Coursera.

What leaders now look for

Pure technical depth is not enough. High-impact data science talent blends:

- Statistical and ML fundamentals

- Software engineering discipline

- Domain knowledge in healthcare or SaaS

This hybrid profile reduces handoffs and speeds delivery.

Healthcare talent reality

- Data scientists must understand clinical context

- Models must align with care workflows and operational realities

- Communication with clinicians matters as much as accuracy

Teams that ignore domain fluency struggle with adoption.

SaaS talent reality

- Data scientists ship features, not reports

- Collaboration with product and platform teams is mandatory

- Reliability and uptime matter as much as performance

The best hires think like product owners.

Implications for leaders

- Reskill existing teams instead of chasing unicorns

- Invest in MLOps and tooling to reduce talent bottlenecks

- Measure success by impact, not model complexity

J. Data-Driven Decision-Making Becomes the Default, Not the Goal

“The real risk in 2026 is not having bad data. It is making decisions without it.”

Data-driven decision-making is no longer a maturity milestone. It is the baseline expectation.

Boards, regulators, and enterprise customers now assume that major decisions are backed by evidence, not intuition. Leaders are expected to show not just what they decided, but why the data supported it.

Why this shift is irreversible

- AI outputs influence high-stakes outcomes

- Decisions are audited after the fact

- Speed without evidence creates risk

Data science has moved from insight generation to decision justification.

What this looks like in practice

Organizations are using data science to:

- Forecast the downstream impact of decisions

- Compare scenarios before acting

- Reduce bias and gut-driven variance

The focus is not perfection. It is defensibility.

Healthcare impact

- Evidence-backed care pathway changes

- Data-supported resource allocation

- Reduced reliance on anecdotal clinical decision-making

This improves consistency and outcomes.

SaaS impact

- Pricing and packaging backed by usage data

- Roadmap decisions tied to measurable signals

- Revenue forecasts grounded in behavior, not hope

Data-driven teams move faster because they argue less.

Ready to turn data science into a dependable growth engine?

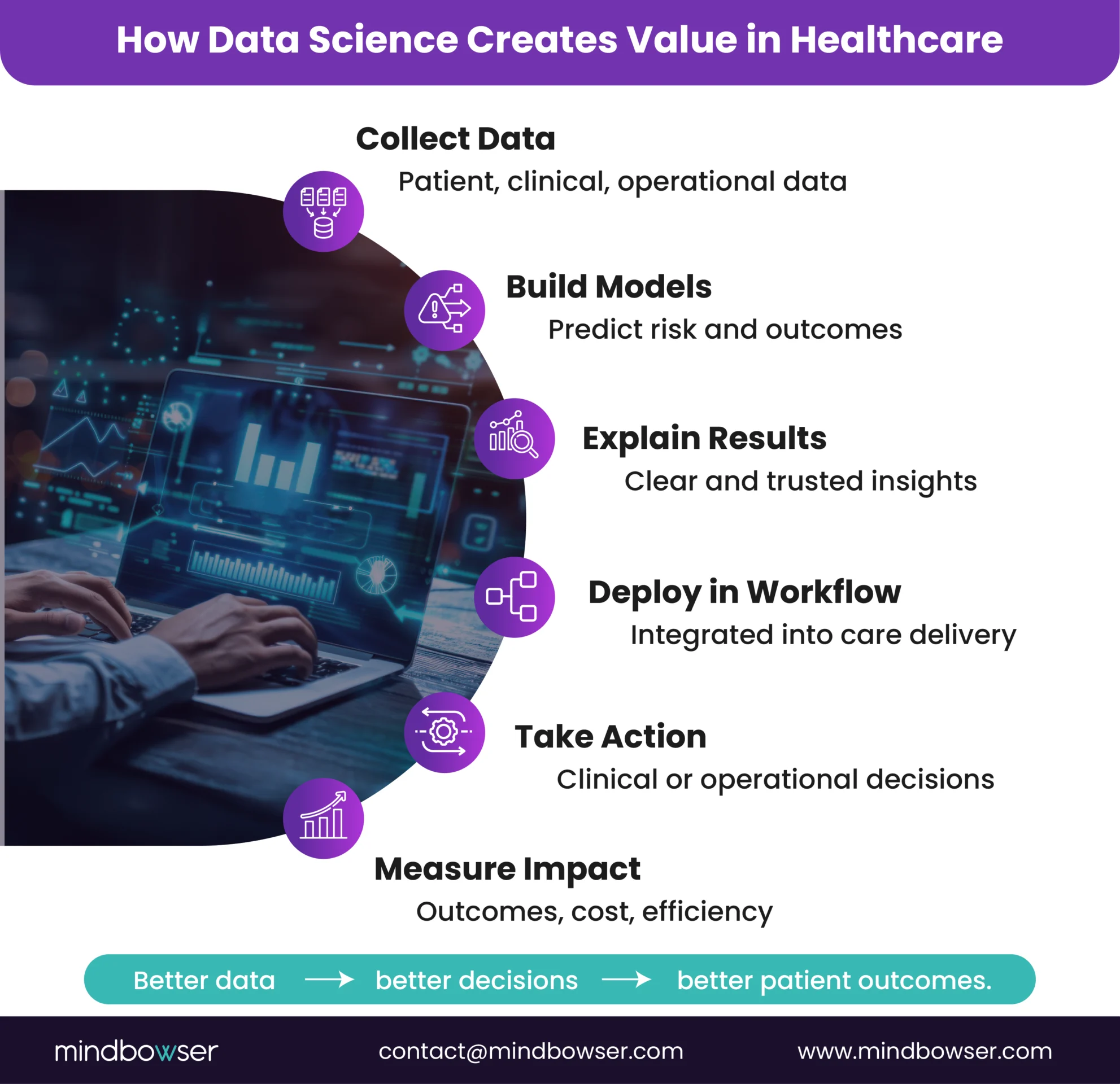

II. Where Data Science Is Delivering Real Value in Healthcare Today

In 2026, data science is no longer proving its relevance. It is proving its return. Across healthcare, leaders are moving past pilots and into repeatable use cases that show measurable impact on cost, outcomes, and care delivery.

The most successful organizations are not chasing every new technique. They are applying data science where decisions are frequent, stakes are high, and feedback loops are clear.

Common high-impact applications include:

- Healthcare: risk stratification, readmission prediction, care gap identification, clinical workflow optimization

- SaaS: churn prediction, pricing intelligence, product usage analytics, customer segmentation

- Operations: demand forecasting, resource allocation, anomaly detection, cost control

Well-known examples reflect this shift. IBM Watson Health, now Merative following IBM's 2022 sale of the business, has applied data science to support clinical decision-making and research. Flatiron Health uses real-world oncology data to improve cancer outcomes. Amazon continues to refine personalization and demand forecasting at scale using advanced analytics.

The pattern is consistent. Value comes not from complexity, but from alignment between data science, business goals, and day-to-day operations.

III. Ethics and Responsible AI in Practice

As data science becomes embedded in clinical care and core SaaS products, ethics and responsibility move from theory into day-to-day operations. In 2026, organizations are judged less by what they promise and more by how their systems behave in real situations.

Responsible AI is not about slowing innovation. It is about making sure models are accurate, fair, explainable, and safe to use at scale.

What responsible data science looks like in practice:

- Bias awareness and mitigation: actively testing models for unfair outcomes and correcting them before deployment

- Privacy and data protection: limiting data access, enforcing consent, and designing systems that respect regulatory boundaries

- Transparency and accountability: ensuring decisions can be explained, audited, and challenged when needed

In healthcare, responsible AI protects patient safety, trust, and clinical integrity. In SaaS, it reduces customer risk and strengthens long-term relationships. Across both, it prevents small technical issues from becoming large reputational or legal problems.

Ethical data science is no longer a side initiative. In 2026, it is part of how mature organizations build and operate intelligent systems.

IV. How Mindbowser Helps Teams Apply Data Science in 2026

Turning data science into business value requires more than models and tools. It requires systems that fit real workflows, meet compliance standards, and scale without constant rework. That is where most organizations struggle.

Mindbowser works with healthcare organizations and digital health teams to design and build production-grade data science solutions, not demos. Every engagement focuses on outcomes, reliability, and long-term ownership.

How Mindbowser supports data science teams:

- Custom-built solutions: no off-the-shelf shortcuts; architectures are designed around your data and use cases

- Healthcare-ready by design: HIPAA-aware systems with security and compliance built in from day one

- Faster paths to production: accelerators that reduce build time without sacrificing control

- Full ownership: clients retain 100 percent IP and control of their data and models

From predictive analytics to AI-driven workflows, Mindbowser helps healthcare organizations move from insight to action without losing compliance, trust, or clinical alignment.

What 2026 Demands From Data Science Leaders

In 2026, data science is no longer about proving potential. It is about delivering results that stand up to scrutiny. Leaders are expected to move faster, explain outcomes clearly, and operate within tighter regulatory and trust boundaries.

Healthcare organizations that win treat data science as core infrastructure. They invest in production readiness, ethical safeguards, and teams that can bridge analytics and execution. Those that do not will continue to pilot, pause, and restart.

If you are planning your next phase of growth, now is the time to assess whether your data science efforts are built to scale or still stuck in experimentation.

Data science is the practice of using data, statistics, and machine learning to answer business questions and guide decisions. In healthcare and SaaS, it helps organizations predict outcomes, reduce risk, and improve performance using evidence rather than intuition.

In 2026, data science is operational and regulated. Models are expected to run in production, integrate with real workflows, and meet compliance and explainability standards. The focus has shifted from experimentation to reliability, governance, and measurable impact.

The most impactful trends include explainable AI, federated learning, MLOps maturity, and edge AI. These trends support stronger clinician trust, privacy protection, real-time decision-making, and scalable deployment in regulated environments.

Explainable AI helps teams understand and defend model-driven decisions. In healthcare, it supports clinical trust and regulatory audits. In SaaS, it builds customer confidence and reduces friction during enterprise sales and security reviews.

Beyond machine learning, teams need skills in software engineering, MLOps, domain knowledge, and communication. The most valuable data scientists can deploy, monitor, and maintain models, not just build them.

Ethical AI reduces legal risk, protects brand reputation, and increases adoption. Addressing bias, privacy, and transparency early prevents costly rework and helps organizations scale AI responsibly.

Large in-house teams are no longer a prerequisite. With the rise of AutoML and cloud-native tooling, mid-market healthcare and SaaS companies can deploy data science without them. Success depends on choosing the right use cases and building for production from the start.

Mindbowser designs and builds custom, production-ready data science solutions for healthcare and SaaS companies. The focus is on compliance-aware architecture, faster time to production, and long-term ownership of data and models.