Common Production Failures: What Goes Wrong and Why

When developers talk about PHI leaks in healthcare applications, the conversation often jumps straight to hackers, breaches, and ransomware.

In reality, most PHI leaks in MERN applications don’t come from attackers at all. They come from ordinary development decisions made during debugging, logging, monitoring, and scaling.

MERN apps are fast to build, flexible, and developer-friendly. That same flexibility is exactly what makes them dangerous in healthcare if PHI boundaries are not intentionally designed.

This blog walks through real-world PHI leakage points in MERN applications, focusing on how data actually flows in production systems, not in ideal architectures.

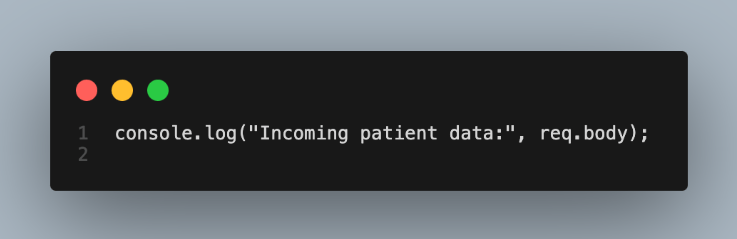

Console Logs: The Most Common PHI Leak You’ll Never Notice

Every MERN developer uses console.log. It’s usually the first debugging tool we reach for, especially when handling complex request payloads like patient intake forms or medical records.

The problem begins when developers log entire request bodies for convenience.

At runtime, req.body often contains names, dates of birth, medical conditions, insurance details, or identifiers. That data doesn’t just appear briefly in your terminal. It gets persisted in cloud logs, aggregated by log management systems, and sometimes retained for months or years.

What makes this especially dangerous is that logs are typically more widely accessible than databases. Support engineers, DevOps teams, and even third-party vendors often have log access. Very few of those people are authorized to see PHI.

The fix is not “log less,” but log smarter. Metadata such as request IDs, field names, or record counts is almost always enough. If you ever feel the urge to log raw payloads in healthcare code, that’s usually a sign your observability design needs improvement.
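As a rough illustration of logging metadata instead of payloads, the sketch below records only a request ID, the field names, and a field count. The helper names `summarizeBody` and `logRequest` are hypothetical, and the sample values are deliberately synthetic.

```javascript
// Hypothetical helper: describe a request body without logging its values.
function summarizeBody(body) {
  const fieldNames = Object.keys(body ?? {}).sort();
  return { fieldNames, fieldCount: fieldNames.length };
}

// Only the request ID and the shape of the payload reach the log sink.
// `log` is injectable so the output can be captured in tests.
function logRequest(requestId, body, log = console.log) {
  log(JSON.stringify({ requestId, ...summarizeBody(body) }));
}

logRequest('req-123', { name: 'TEST-PATIENT-0001', dob: '1900-01-01' });
```

The log line still tells you which fields arrived and how many, which is usually what a debugging session needs, while the values themselves never leave the request handler.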

API Error Responses: When Debugging Becomes Data Exposure

Error handling is another silent PHI leak that shows up repeatedly in MERN codebases.

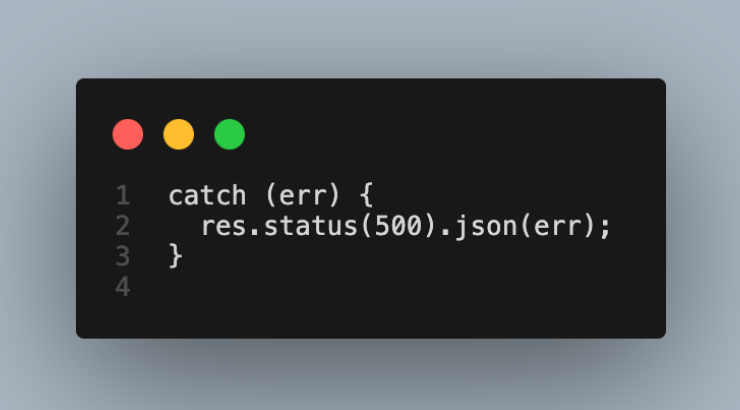

A common pattern looks harmless: the route catches an error and returns the error object itself in the JSON response.

Unfortunately, error objects often contain contextual data: the values that caused validation failures, database conflicts, or schema mismatches. In healthcare systems, those values are frequently PHI.

Once returned in an API response, this data is exposed to:

- Browser network logs

- Frontend error boundaries

- Monitoring tools

- Screenshots shared with support

Even worse, frontend applications sometimes log error responses again, multiplying the leak.

A safe healthcare API treats error responses as public contracts, never as debugging tools. Internal errors should be logged securely on the server, while clients receive only sanitized messages and correlation IDs.
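One way to separate the two audiences is sketched below, assuming an Express-style error middleware. The `splitError` helper, the generic client message, and the idea that stderr feeds an access-controlled log sink are all assumptions made for illustration.

```javascript
const { randomUUID } = require('node:crypto');

// Hypothetical helper: split one error into a server-side log entry and a
// sanitized client payload that share a correlation ID.
function splitError(err) {
  const correlationId = randomUUID();
  return {
    // The message and stack may still contain PHI, so this entry belongs
    // only in a restricted server-side log.
    serverLog: { correlationId, name: err.name, message: err.message, stack: err.stack },
    // The client sees a fixed message plus the ID needed to look up details.
    clientBody: { error: 'Request could not be processed.', correlationId },
  };
}

// Express recognizes error middleware by its four-argument signature,
// so `next` stays in the parameter list even though it is unused here.
function errorHandler(err, req, res, next) {
  const { serverLog, clientBody } = splitError(err);
  console.error(JSON.stringify(serverLog)); // assumption: stderr is access-controlled
  res.status(500).json(clientBody);
}
```

Support staff can then ask a user for the correlation ID instead of a screenshot of the raw error, and only people with access to the server log can see the underlying details.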

Frontend Analytics and Monitoring: The Leak You Didn’t Intend

Modern React applications are heavily instrumented. Analytics, error tracking, session replay, and performance monitoring tools are often added early in development, sometimes before compliance is even considered.

The danger is that many of these tools automatically capture:

- Input values

- DOM state

- Redux or form state

- Network payloads

If a patient types their SSN, diagnosis, or medication into a form, that data may be recorded verbatim by session replay or analytics tools unless explicitly masked.

This is one of the most overlooked PHI leakage points because nothing looks broken. The app works perfectly; it’s just quietly exporting sensitive data to third-party systems.

In healthcare-grade React apps, telemetry must be treated as a data pipeline, not a utility. Sensitive pages require masking, selective tracking, or complete exclusion from analytics altogether.
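Masking can be sketched as a scrubbing step that runs before any event leaves the browser. The key list and the `scrubEvent` name below are illustrative assumptions; a real list would be derived from your own data model, and fully excluding sensitive pages remains the stricter option.

```javascript
// Assumption: an illustrative list of keys whose values must never be exported.
const SENSITIVE_KEYS = new Set(['ssn', 'diagnosis', 'medication', 'dob', 'name']);

// Recursively replace values stored under sensitive keys (case-insensitive).
function scrubEvent(value) {
  if (Array.isArray(value)) return value.map(scrubEvent);
  if (value !== null && typeof value === 'object') {
    return Object.fromEntries(
      Object.entries(value).map(([key, inner]) =>
        SENSITIVE_KEYS.has(key.toLowerCase()) ? [key, '[redacted]'] : [key, scrubEvent(inner)]
      )
    );
  }
  return value; // primitives under non-sensitive keys pass through unchanged
}
```

Many telemetry SDKs expose some form of pre-send hook where a function like this could be called. A key-based denylist only catches fields it knows about, so it works best alongside selective tracking rather than as the only control.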

MongoDB Backups and Snapshots: PHI That Escapes the Database

Most teams focus on securing their production database, but far fewer think about what happens after the data is copied.

MongoDB backups and snapshots are routinely:

- Restored locally for debugging

- Shared across environments

- Stored in lower-security storage tiers

Once production PHI exists in non-production environments, compliance boundaries collapse. Developer laptops, test servers, and CI pipelines are rarely secured to healthcare standards.

The safest healthcare teams treat production PHI as non-exportable. Non-production environments rely on synthetic or masked data, and any backup process is audited just as carefully as live database access.
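A masking step for data that must leave production might look like the sketch below. The field names are hypothetical, and the function builds a new object from scratch rather than deleting fields, so a field added to the schema later is dropped by default instead of leaking.

```javascript
const { createHash } = require('node:crypto');

// Hypothetical patient shape. Everything not explicitly rebuilt here is dropped.
function maskPatient(doc) {
  // A short hash of the ID keeps masked records distinguishable from each other.
  const tag = createHash('sha256').update(String(doc._id)).digest('hex').slice(0, 8);
  return {
    _id: doc._id,
    name: `SYNTHETIC-${tag}`,
    dob: '1900-01-01', // fixed placeholder date, clearly not a real birthday
    ssn: null,
    conditions: [],
  };
}
```

Running a function like this inside the production boundary, before a dump is written, means the unmasked copy never exists on a laptop or CI runner in the first place.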

Infrastructure-Level Logging: When the Platform Leaks for You

Even if your Express app avoids logging PHI, your infrastructure might not.

Reverse proxies, API gateways, and load balancers often log request bodies by default. These logs exist outside your application code and are easy to forget about during compliance reviews.

This creates a dangerous illusion: developers believe they’ve removed all sensitive logs, while PHI continues to be captured one layer below.

Healthcare systems must define PHI boundaries at every layer, including infrastructure. Request bodies should never be logged at gateways, and redaction should happen as early as possible in the request lifecycle.
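Gateway and proxy logging is normally controlled through each product's own configuration, so the sketch below only illustrates the principle in code: the formatter accepts a fixed set of fields, which leaves no way for a request body to reach the log. The function name is hypothetical, and dropping the query string is an added assumption on the grounds that search parameters can also carry identifiers.

```javascript
// Hypothetical access-log formatter for the first layer that sees a request.
// It destructures only the fields it is allowed to log; anything else,
// including a body, is ignored.
function toAccessLogEntry({ method, url, status, durationMs }) {
  // The base URL is a placeholder needed only to parse a relative path.
  const { pathname } = new URL(url, 'http://placeholder.invalid');
  return { method, path: pathname, status, durationMs };
}
```

The same allowlist idea applies when writing a log format for a proxy or gateway: list the fields you want, instead of starting from everything and trying to subtract the sensitive parts.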

Email and Notification Systems: PHI in the Inbox

Emails are convenient. That’s exactly why they’re dangerous.

It’s common to see code that includes patient details in notification emails for operational convenience. Once PHI enters an email system, it becomes nearly impossible to control:

- Emails are forwarded

- Stored indefinitely

- Accessed from personal devices

Email systems are rarely audited to the same level as healthcare applications themselves.

A safer approach is to treat email as a signal channel, not a data channel. Notifications should reference records indirectly using IDs or links, never embed sensitive details directly.
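A minimal sketch of a signal-only notification follows. The portal URL and the `buildNotification` name are hypothetical, and the linked page is assumed to require a login before showing anything.

```javascript
// Hypothetical base URL; the target page is assumed to sit behind authentication.
const PORTAL_URL = 'https://portal.example.com';

// The function accepts only an ID, so patient details cannot be
// interpolated into the message by accident.
function buildNotification(recordId) {
  const link = `${PORTAL_URL}/records/${encodeURIComponent(recordId)}`;
  return {
    subject: 'A record needs your review',
    text: `Record ${recordId} has been updated. Sign in to view it: ${link}`,
  };
}
```

If the email is forwarded or read on a personal device, the recipient learns only that something changed. Access to the details is still decided by the application at the moment the link is opened.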

Frontend State Persistence: PHI That Never Gets Deleted

React developers often persist form state to improve user experience: auto-saving drafts, recovering from refreshes, or supporting multi-step workflows.

When this state includes PHI and is stored in localStorage or sessionStorage, it creates long-lived exposure:

- Shared computers

- Cached browser profiles

- Malware or extensions

- Accidental screenshots

In healthcare applications, PHI should be treated as memory-only data on the frontend. Once the user navigates away, the data should be gone.
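The memory-only approach can be sketched as a small draft store that never touches localStorage or sessionStorage. The names `createDraftStore` and `intakeDraft` are hypothetical.

```javascript
// Minimal in-memory draft store: state lives in a closure and nowhere else.
function createDraftStore() {
  let draft = {};
  return {
    update(fields) { draft = { ...draft, ...fields }; },
    read() { return { ...draft }; }, // return a copy so callers cannot mutate the store
    clear() { draft = {}; },
  };
}

const intakeDraft = createDraftStore();

// In a browser, drop the draft when the page is hidden or unloaded.
// The guard keeps this sketch runnable outside a browser as well.
if (typeof window !== 'undefined') {
  window.addEventListener('pagehide', () => intakeDraft.clear());
}
```

For in-app route changes, calling `clear()` from the form component's unmount cleanup would play the same role. The cost is that a refresh loses the draft, which is the trade-off this section argues for.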

Background Jobs and Queues: PHI in Motion

Background workers are essential in scalable MERN systems. Unfortunately, they’re also a frequent PHI leak point.

Passing full patient objects into queues means that:

- Payloads are stored

- Retries duplicate data

- Dashboards display raw content

If the queue system logs or persists job payloads, PHI spreads across yet another system boundary.

The safer pattern is simple but strict: background jobs should pass identifiers only, never full records.
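The identifiers-only pattern might look like this sketch. The job type, the helper names, and `loadPatient` (standing in for whatever data-access function your application already uses) are all hypothetical.

```javascript
// Hypothetical job builder: the payload carries a reference, not the record.
function toJobPayload(patient) {
  return { type: 'generate-report', patientId: String(patient._id) };
}

// Hypothetical worker: the record is loaded at execution time through
// `loadPatient`, an assumed function that applies the usual access controls.
async function handleJob(job, loadPatient) {
  const patient = await loadPatient(job.patientId);
  if (!patient) return { status: 'skipped' }; // record no longer exists
  // ...the actual work with `patient` would happen here...
  return { status: 'done', patientId: job.patientId };
}
```

Whatever the queue stores, retries, or shows on a dashboard is now an opaque ID. A side benefit is that the worker always sees the current record rather than a copy captured at enqueue time.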

Test Data and Mock Files: When “Fake” Data Isn’t Fake Enough

Many teams underestimate the risk of test data. Using realistic names, dates, and conditions feels harmless until repositories are shared, CI logs are exposed, or test fixtures are reused elsewhere.

In healthcare, even test data must be obviously synthetic. There should be no ambiguity about whether data represents a real person.

Clear conventions and tooling help enforce this discipline across teams.
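One possible convention, sketched below, is a fixed marker prefix on every fixture plus a guard that fails when the marker is missing. The prefix, field names, and function names are assumptions chosen for illustration.

```javascript
// Assumed convention: every fixture name starts with this marker.
const SYNTHETIC_PREFIX = 'TEST-PATIENT-';

function makeSyntheticPatient(n) {
  return {
    name: `${SYNTHETIC_PREFIX}${String(n).padStart(4, '0')}`,
    dob: '1900-01-01',
    condition: 'SYNTHETIC-CONDITION',
  };
}

// Guard for a test suite or CI step: throw if any fixture lacks the marker.
function assertSynthetic(fixtures) {
  const unmarked = fixtures.filter((f) => !String(f.name).startsWith(SYNTHETIC_PREFIX));
  if (unmarked.length > 0) {
    throw new Error(`${unmarked.length} fixture(s) are not marked as synthetic`);
  }
}
```

Running `assertSynthetic` over fixture files in CI turns the convention into something enforced rather than merely documented.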

Screenshots and Support Workflows: The Human Factor

No matter how well systems are designed, humans will take screenshots. Support teams will ask for them. Bugs will be reported visually.

If PHI is fully visible in the UI, screenshots become yet another uncontrolled distribution channel.

Mature healthcare applications assume screenshots will happen and design UIs accordingly: masking sensitive fields, limiting visibility by role, and minimizing on-screen exposure whenever possible.
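Role-based masking can be sketched as a policy lookup applied before a record is rendered. The roles, field names, and mask string below are hypothetical; unknown roles and unlisted fields are masked by default.

```javascript
// Hypothetical role-to-field policy. A Map avoids accidental hits on
// inherited object properties when looking up a role name.
const VISIBLE_FIELDS = new Map([
  ['clinician', new Set(['name', 'dob', 'diagnosis'])],
  ['support', new Set(['name'])],
]);

// Return a copy of the record with every non-visible field masked.
function maskForRole(record, role) {
  const visible = VISIBLE_FIELDS.get(role) ?? new Set();
  return Object.fromEntries(
    Object.entries(record).map(([key, value]) => [key, visible.has(key) ? value : '****'])
  );
}
```

Applying this on the server, before the response is sent, is the stronger variant, because a field that never reaches the browser cannot appear in a screenshot.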

Conclusion

Most PHI leaks in MERN healthcare applications are not the result of sophisticated cyberattacks. Instead, they often arise from everyday development practices such as debugging shortcuts, overly detailed logs, observability tools, infrastructure defaults, and routine human workflows. These small conveniences, while helpful during development, can unintentionally expose sensitive patient data when systems move into production.

The reality is that sensitive data tends to travel farther than expected. Logs, analytics tools, monitoring platforms, backups, and support processes can all become unexpected pathways for PHI exposure. When these systems are added without carefully defining data boundaries, patient information can quietly spread across multiple systems and environments.

Healthcare-grade MERN applications address this challenge by designing data flows intentionally from the start. Instead of relying solely on additional security tools, strong systems focus on minimizing where PHI travels, restricting how it is logged or shared, and building clear boundaries around sensitive data throughout the architecture.