When teams compare orchestration engines, the discussion typically begins with features such as scheduling, retries, UI, and integrations.

That’s useful but incomplete.

Orchestration tools don’t just run workflows. They encode opinions about failure, ownership, and system design.

In this post, we compare Apache Airflow, Kestra, and AWS Step Functions — not by feature checklists, but by the mental models they impose on your architecture.

Orchestration Beyond “Run This, Then That”

Real-world orchestration must handle:

- Distributed systems

- Partial failures

- Long-running processes

- Retries, backfills, and human intervention

Different tools handle these concerns in fundamentally different ways, and those differences tend to surface months later, when systems scale or fail.

Three Mental Models of Orchestration

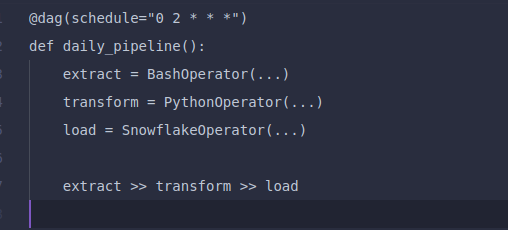

Apache Airflow: Orchestration as Code

Apache Airflow treats workflows as Python code that defines a static DAG (Directed Acyclic Graph).

What This Model Optimizes For

- Maximum flexibility

- Python-native logic

- Deterministic batch workflows

Example Workflow
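Running real Airflow code requires an Airflow installation, so here is a stdlib-only Python sketch of the model Airflow imposes. It is not Airflow's API. The whole graph is declared before anything runs, tasks execute in dependency order, and a failure is an exception raised inside a task that stops its downstream tasks. The pipeline, task names, and the `run_dag` helper are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Hypothetical task bodies for a nightly batch pipeline.
def extract() -> None:
    print("extracting rows")

def transform() -> None:
    print("transforming rows")

def load() -> None:
    print("loading rows")

def broken_transform() -> None:
    # Stands in for a task that hits bad input.
    raise ValueError("unexpected schema")

# The whole graph is declared before anything runs, as in a static DAG:
# each task maps to the set of upstream tasks it depends on.
TASKS: dict[str, Callable[[], None]] = {"extract": extract, "transform": transform, "load": load}
DEPENDENCIES: dict[str, set[str]] = {"extract": set(), "transform": {"extract"}, "load": {"transform"}}

def run_dag(
    tasks: dict[str, Callable[[], None]],
    dependencies: dict[str, set[str]],
    retries: int = 1,
) -> dict[str, str]:
    """Run each task once its upstream tasks succeed; return every task's final state."""
    states: dict[str, str] = {}
    for name in TopologicalSorter(dependencies).static_order():
        # Task-centric handling: if any upstream task did not succeed,
        # this task is not run and is marked upstream_failed.
        if any(states[upstream] != "success" for upstream in dependencies[name]):
            states[name] = "upstream_failed"
            continue
        # A failure is an exception raised inside the task; retry the task in place.
        for _ in range(retries + 1):
            try:
                tasks[name]()
                states[name] = "success"
                break
            except Exception:
                states[name] = "failed"
    return states

print(run_dag(TASKS, DEPENDENCIES))
print(run_dag({**TASKS, "transform": broken_transform}, DEPENDENCIES))
```

The state names are borrowed from Airflow's task states (`success`, `failed`, `upstream_failed`). In Airflow itself, the same pipeline would be declared as operators or `@task` functions inside a `DAG`, wired with `extract >> transform >> load`, with `retries` set per task.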

Strengths

- Mature ecosystem

- Familiar to data engineers

- Powerful for predictable pipelines

Trade-offs

- Heavy operational footprint

- Static DAGs limit dynamic behavior

- Failure handling is task-centric

Best fit:

Batch data pipelines owned by data or analytics teams.

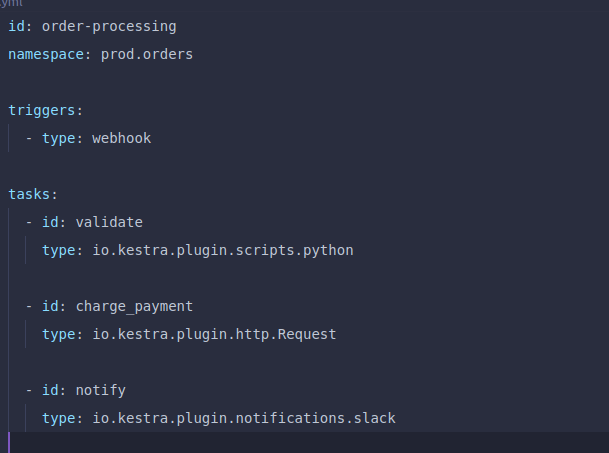

Kestra: Orchestration as Events

Kestra focuses on event-driven orchestration with declarative workflows defined in YAML.

What This Model Optimizes For

- Event-driven systems

- Cross-service orchestration

- Explicit failure semantics

Example Workflow
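A stdlib-only Python sketch of the model Kestra imposes, not Kestra itself: the flow is plain data, a triggering event starts a new execution, and a failure is recorded as the state of that execution, which then runs the flow's declared `errors` tasks. The keys `id`, `namespace`, `tasks`, `retry`, `maxAttempt`, and `errors` loosely follow Kestra's flow schema; the `run` key, the order-processing handlers, and the `execute` engine are hypothetical, and treating `maxAttempt` as the total number of attempts is an assumption of this sketch.

```python
from typing import Callable

# The flow is plain data. Its keys loosely follow Kestra's YAML flow schema;
# the "run" key is hypothetical and stands in for a Kestra plugin `type`.
FLOW = {
    "id": "process-order",
    "namespace": "shop.orders",
    "tasks": [
        {"id": "reserve_stock", "run": "reserve_stock", "retry": {"maxAttempt": 3}},
        {"id": "charge_card", "run": "charge_card", "retry": {"maxAttempt": 2}},
    ],
    # Tasks that run only when the execution ends up FAILED.
    "errors": [{"id": "notify_ops", "run": "notify_ops"}],
}

Handler = Callable[[dict], None]

def execute(flow: dict, event: dict, handlers: dict[str, Handler]) -> dict:
    """Create one execution of `flow` for a triggering event and run it to completion."""
    execution: dict = {"state": "RUNNING", "task_states": {}, "attempts": {}}
    for task in flow["tasks"]:
        # Assumption: maxAttempt is treated here as the total number of attempts.
        max_attempt = task.get("retry", {}).get("maxAttempt", 1)
        for attempt in range(1, max_attempt + 1):
            execution["attempts"][task["id"]] = attempt
            try:
                handlers[task["run"]](event)
                execution["task_states"][task["id"]] = "SUCCESS"
                break
            except Exception:
                execution["task_states"][task["id"]] = "FAILED"
        if execution["task_states"][task["id"]] == "FAILED":
            # The failure is recorded as the state of the whole execution...
            execution["state"] = "FAILED"
            break
    if execution["state"] == "FAILED":
        # ...and the declared `errors` branch runs as ordinary workflow tasks.
        for task in flow.get("errors", []):
            handlers[task["run"]](event)
            execution["task_states"][task["id"]] = "SUCCESS"
    else:
        execution["state"] = "SUCCESS"
    return execution

# Hypothetical handlers for an order-processing flow.
def reserve_stock(event: dict) -> None:
    print(f"reserved stock for order {event['order_id']}")

def charge_card(event: dict) -> None:
    # Simulates a payment provider outage for this event.
    raise ConnectionError("payment provider unavailable")

def notify_ops(event: dict) -> None:
    print(f"alerting ops about order {event['order_id']}")

HANDLERS: dict[str, Handler] = {
    "reserve_stock": reserve_stock,
    "charge_card": charge_card,
    "notify_ops": notify_ops,
}

# An incoming event (e.g. a webhook payload) triggers a new execution.
result = execute(FLOW, {"order_id": 42}, HANDLERS)
print(result["state"], result["task_states"])
```

In an actual Kestra flow, the YAML would also name each task's plugin `type`, and the event that starts an execution would be declared in the flow's `triggers` section (for example, a webhook or a schedule).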

Strengths

- Clear separation of logic and execution

- Strong retry and error handling

- Dynamic workflows are first-class

Trade-offs

- Smaller ecosystem than Airflow

- A new mental model for teams used to imperative code

Best fit:

Event-driven architectures orchestrating multiple systems.


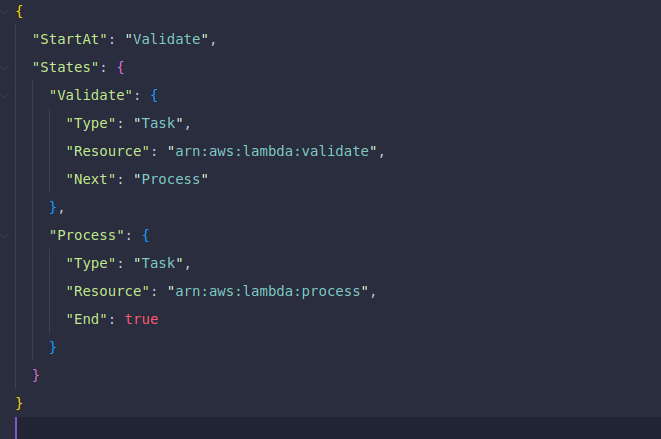

AWS Step Functions: Orchestration as Infrastructure

AWS Step Functions model workflows as explicit state machines, defined in the JSON-based Amazon States Language.

What This Model Optimizes For

- Reliability

- Auditability

- Long-running workflows

Example Workflow
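A sketch of an explicit state machine, assuming Lambda-backed tasks with placeholder ARNs: the Python below builds an Amazon States Language definition and serializes it to the JSON document Step Functions consumes. Retries, timeouts, and the failure path are declared as states and fields rather than written as code inside tasks. The state names, the ARNs, and the `transition_targets` helper are illustrative and not part of any AWS SDK.

```python
import json

# Placeholder Lambda ARN template (hypothetical account ID and function names).
LAMBDA_ARN = "arn:aws:lambda:us-east-1:123456789012:function:{}"

# Retry transient faults with exponential backoff: waits of 2s, 4s, then 8s.
TRANSIENT_RETRY = {
    "ErrorEquals": ["Lambda.ServiceException", "Lambda.TooManyRequestsException", "States.Timeout"],
    "IntervalSeconds": 2,
    "MaxAttempts": 3,
    "BackoffRate": 2.0,
}

# Any other error, or one still failing after retries, goes down an explicit failure path.
CATCH_ALL = {"ErrorEquals": ["States.ALL"], "ResultPath": "$.error", "Next": "NotifyFailure"}

definition = {
    "Comment": "Order workflow with declared retry and failure paths (illustrative)",
    "StartAt": "ReserveStock",
    "States": {
        "ReserveStock": {
            "Type": "Task",
            "Resource": LAMBDA_ARN.format("reserve-stock"),
            "TimeoutSeconds": 30,
            "Retry": [TRANSIENT_RETRY],
            "Catch": [CATCH_ALL],
            "Next": "ChargeCard",
        },
        "ChargeCard": {
            "Type": "Task",
            "Resource": LAMBDA_ARN.format("charge-card"),
            "TimeoutSeconds": 30,
            "Retry": [TRANSIENT_RETRY],
            "Catch": [CATCH_ALL],
            "Next": "OrderComplete",
        },
        "NotifyFailure": {
            "Type": "Task",
            "Resource": LAMBDA_ARN.format("notify-ops"),
            "Next": "OrderFailed",
        },
        "OrderComplete": {"Type": "Succeed"},
        "OrderFailed": {"Type": "Fail", "Error": "OrderFailed", "Cause": "Order could not be processed"},
    },
}

def transition_targets(states: dict) -> set[str]:
    """Collect every state named by a Next field, including Catch targets."""
    targets: set[str] = set()
    for state in states.values():
        if "Next" in state:
            targets.add(state["Next"])
        for catcher in state.get("Catch", []):
            targets.add(catcher["Next"])
    return targets

# Local sanity check: every declared path, including failure paths, leads to a defined state.
assert transition_targets(definition["States"]) <= set(definition["States"])

# The serialized JSON is the state machine definition itself.
print(json.dumps(definition, indent=2))
```

The resulting string is what you would pass as the `definition` when creating a state machine, for example through `boto3`'s `create_state_machine`. The verbosity listed under trade-offs is already visible: two business steps span five states once the failure path is spelled out.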

Strengths

- Built-in retries and timeouts

- Excellent observability

- Minimal operational overhead

Trade-offs

- Verbose definitions

- AWS vendor lock-in

- Less flexible outside AWS

Best fit:

AWS-native systems with strong reliability requirements.

Failure Handling: The Real Differentiator

| Tool | Failure Philosophy |
|---|---|
| Airflow | Failures are exceptions |
| Kestra | Failures are workflow states |
| Step Functions | Failures are designed paths |

This difference shows up in how systems behave under pressure, not on the happy path.

Developer Experience vs Platform Guarantees

- Airflow maximizes flexibility but requires operational maturity

- Kestra balances abstraction and control

- Step Functions prioritize safety and observability

Choosing an orchestrator means choosing where complexity lives.

When Each Tool Starts to Hurt

Airflow struggles when

- Workflows become event-driven

- Dynamic behavior is required

- Infrastructure ownership becomes a burden

Kestra struggles when

- Heavy imperative logic dominates

- Niche integrations are required

Step Functions struggle when

- Portability matters

- Workflow definitions grow too complex

How We’d Choose in Practice

- Airflow → batch data pipelines

- Kestra → event-driven system orchestration

- Step Functions → AWS-native, compliance-heavy workflows

There is no universal winner — only contextual fit.

Conclusion

Choosing between Airflow, Kestra, and AWS Step Functions isn’t about which tool has more features; it’s about which orchestration model matches how your systems fail, scale, and are owned. Each tool encodes a different philosophy: code-first control, event-driven coordination, or infrastructure-level reliability.

The right choice depends on where you want complexity to live over time. Teams that align orchestration with their architecture early avoid brittle workflows, operational drag, and painful rewrites when systems grow.