In the rapidly evolving landscape of artificial intelligence and language models, prompt engineering takes centre stage. It guides AI models towards accurate and contextually relevant responses, and the skilful crafting of precise instructions, or prompts, is key to getting the best performance and efficiency from language model (LM) systems.

In this blog post, we look closely at prompt engineering: why it matters, which techniques it relies on, and how it affects the quality of the results you get from language models.

Related read: Large Language Models: Complete Guide for 2023

Explore how Mindbowser Healthcare communicates with its innovative platform powered by Open AI’s LLM. The case study delves into the challenges faced in traditional healthcare communication processes and how the collaboration led to the development of a platform that connects healthcare providers with patients seamlessly.

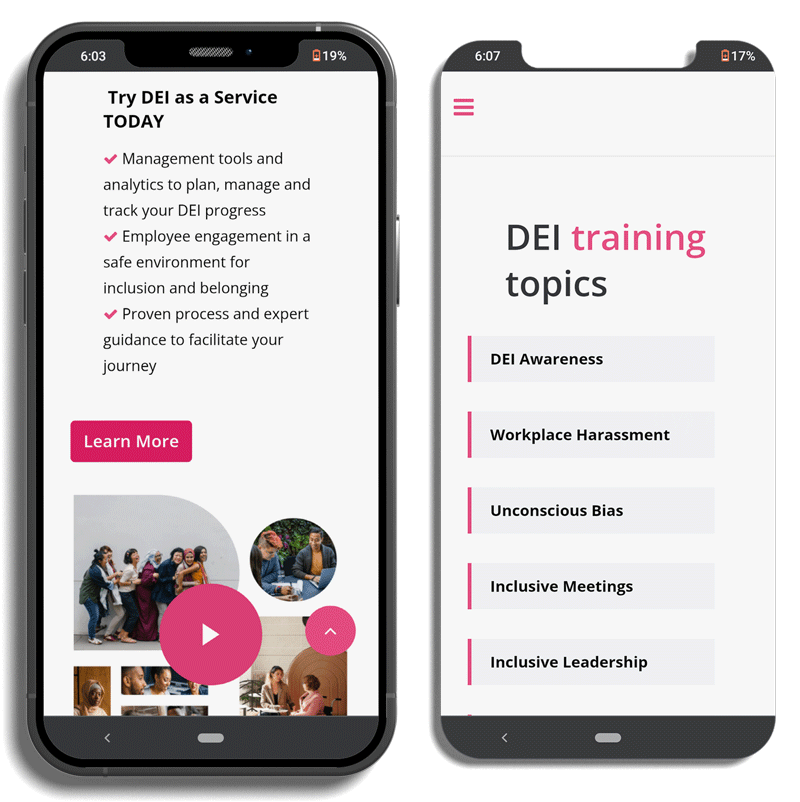

Delve into the journey of a leading DEI Operating System, as it overcomes the challenges within its internal features. Faced with an old user interface and inefficient functionality, the organization recognized the need for modernization to improve user experience and efficiency. Through strategic solutions and innovative enhancements, we navigated these challenges, reshaped office dynamics, and established a more inclusive workplace environment.

Prompt engineering is the backbone of many AI applications, especially in the context of language generation tasks. A well-designed prompt provides crucial context and instruction to the AI model, enabling it to generate relevant and coherent responses. Without proper prompts, AI models may produce inaccurate or irrelevant outputs, leading to suboptimal performance and user dissatisfaction.

By crafting effective prompts, prompt engineers can guide AI models to achieve higher accuracy, reduced bias, and improved task-specific results. As language models become more sophisticated and widely deployed, the role of prompt engineering becomes increasingly vital in shaping the future of AI-powered applications.

Prompt engineers have a diverse set of techniques in their toolkit, each suited to improving language model performance in a different way. The right technique depends on the specific demands of the task at hand.

Instructional prompts function as clear and concise directives to the AI model, outlining the specific task it is expected to execute. These prompts leave little room for ambiguity, setting a strong foundation for the AI model’s understanding and response generation. They provide a sense of purpose and clarity that guides the model’s thought process.

For instance: “Translate the following English text into French: ‘Hello, how are you?'”

Conditional prompts introduce an element of context and flexibility to the AI model’s responses. They allow the model to adjust its output based on specific conditions or input cues, enhancing the model’s adaptability and responsiveness to varying scenarios. This technique enables the AI model to simulate personalized interactions.

For example: “If the input text mentions ‘sunny,’ respond with ‘Enjoy the weather!’ If it references ‘rainy,’ respond with ‘Don’t forget your umbrella!'”
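
A conditional prompt like this can be generated from a small table of rules. The sketch below is only an illustration: the helper names `build_conditional_prompt` and `expected_reply` and the fallback reply are assumptions, not part of any library. The second helper applies the same rules locally, which gives you a reference answer to compare against whatever the model returns.

```python
# Keyword -> reply rules taken from the example above.
RULES = {
    "sunny": "Enjoy the weather!",
    "rainy": "Don't forget your umbrella!",
}
# Hypothetical default reply; the original example does not define one.
FALLBACK = "Have a nice day!"


def build_conditional_prompt(rules, fallback):
    """Turn keyword/reply rules into a conditional prompt, one rule per line."""
    lines = [
        f'If the input text mentions "{keyword}", respond with "{reply}".'
        for keyword, reply in rules.items()
    ]
    lines.append(f'Otherwise, respond with "{fallback}".')
    return "\n".join(lines)


def expected_reply(text, rules, fallback):
    """Apply the same rules locally to get a reference answer for a given input."""
    lowered = text.lower()
    for keyword, reply in rules.items():
        if keyword in lowered:
            return reply
    return fallback


prompt = build_conditional_prompt(RULES, FALLBACK)
print(prompt)
print(expected_reply("It looks rainy this afternoon.", RULES, FALLBACK))
```

Keeping the rules in one dictionary means the prompt and the reference check cannot drift apart when a condition is added or changed.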

Multiple-choice prompts inject an element of decision-making into the AI model’s responses. By presenting a set of options, the model is tasked with selecting the most suitable response from the provided choices. This technique transforms the interaction into a dynamic exchange, where the model’s choice reflects its understanding.

For instance: “What is the capital of France? (a) Paris (b) London (c) Berlin”
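
Multiple-choice prompts are easy to generate and, just as usefully, easy to parse. Here is a minimal sketch, assuming the model is asked to answer with a letter; `build_multiple_choice_prompt` and `parse_choice` are hypothetical helper names, and the parsing rule is deliberately strict rather than a complete solution.

```python
import re
import string


def build_multiple_choice_prompt(question, options):
    """Label each option (a), (b), (c)... and append it to the question."""
    if len(options) > len(string.ascii_lowercase):
        raise ValueError("too many options to label with single letters")
    labelled = " ".join(
        f"({letter}) {option}"
        for letter, option in zip(string.ascii_lowercase, options)
    )
    return f"{question} {labelled}\nAnswer with the letter only."


def parse_choice(reply, options):
    """Map a reply such as 'a', '(a)' or '(a) Paris' back to the chosen option.

    Returns None when the reply does not start with a single option letter.
    """
    match = re.match(r"\s*\(?([a-z])\)?(?![a-z])", reply.lower())
    if match is None:
        return None
    index = ord(match.group(1)) - ord("a")
    return options[index] if index < len(options) else None


options = ["Paris", "London", "Berlin"]
prompt = build_multiple_choice_prompt("What is the capital of France?", options)
print(prompt)
print(parse_choice("(a) Paris", options))
```

Because the reply is constrained to a letter, a free-text answer such as "Paris" is rejected instead of being guessed at, which makes unexpected model behaviour visible early.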

Fill-in-the-blank prompts challenge the AI model to complete an incomplete sentence or phrase. This prompts the model to not only understand the context but also predict missing words. This technique assesses the model’s ability to comprehend and generate relevant content within a given context.

For example: “The first man on the ____ was Neil Armstrong.”
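
A fill-in-the-blank prompt can be built from any sentence that contains a blank marker. The sketch below assumes the marker is four underscores, as in the example above; `build_cloze_prompt` and `fill_blank` are hypothetical helper names.

```python
BLANK = "____"  # blank marker, assumed to be four underscores


def build_cloze_prompt(sentence):
    """Wrap a sentence containing exactly one blank in a fill-in instruction."""
    if sentence.count(BLANK) != 1:
        raise ValueError("expected exactly one blank in the sentence")
    return f'Fill in the blank with the missing word: "{sentence}"'


def fill_blank(sentence, answer):
    """Insert the model's answer into the blank to rebuild the full sentence."""
    return sentence.replace(BLANK, answer.strip(), 1)


sentence = "The first man on the ____ was Neil Armstrong."
print(build_cloze_prompt(sentence))
print(fill_blank(sentence, "Moon"))
```

Rebuilding the full sentence from the model's answer makes it easy to read the result back and judge whether it is sensible in context.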

Example-based demonstrations provide the AI model with tangible examples of desired outputs. This technique guides the model to generate responses similar to the provided examples, encouraging it to align its output with established standards. It promotes consistency and coherence in responses.

For example: “Here are positive customer reviews. Generate responses akin to these for the given customer queries.”
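
In practice, the examples are usually placed directly in the prompt, ahead of the new query (often called few-shot prompting). The following sketch shows one way to assemble such a prompt. The helper name `build_few_shot_prompt`, the "Customer:" and "Response:" labels, and the sample reviews are all made up for illustration.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Place worked examples before the new query so the model can imitate them."""
    parts = [instruction, ""]
    for customer_message, response in examples:
        parts.append(f"Customer: {customer_message}")
        parts.append(f"Response: {response}")
        parts.append("")  # blank line between examples
    # End on an open "Response:" so the model continues in the same pattern.
    parts.append(f"Customer: {query}")
    parts.append("Response:")
    return "\n".join(parts)


# Hypothetical example data, invented for this sketch.
examples = [
    ("The delivery was fast, thank you!", "We're glad it arrived quickly. Thanks for your order!"),
    ("Great support team.", "Thank you! We'll pass your kind words on to the team."),
]
prompt = build_few_shot_prompt(
    "Reply to each customer in the same friendly tone as the examples.",
    examples,
    "The app is really easy to use.",
)
print(prompt)
```

Two or three well-chosen examples are often enough to convey tone and format; adding more lengthens the prompt, so it is worth checking whether the extra examples actually change the output.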

Contextual prompts harness the context of prior interactions to generate responses that are contextually aligned. By referencing earlier parts of the conversation, the AI model produces responses that maintain consistency and relevance within the ongoing dialogue. This technique mirrors human conversational continuity.

As an illustration: “Based on the preceding conversation, address the query: ‘What time does the restaurant open tomorrow?'”
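
To make this concrete, the earlier turns of the conversation have to be included in the prompt. The sketch below assumes the history is stored as a list of `role`/`content` dictionaries, a common convention but not one the technique requires. `build_contextual_prompt`, the `max_turns` limit, and the sample conversation are hypothetical.

```python
def build_contextual_prompt(history, query, max_turns=6):
    """Prepend the most recent conversation turns to the new query."""
    # Keep only the latest turns so the prompt stays within a manageable size.
    recent = history[-max_turns:] if max_turns > 0 else []
    lines = [f"{turn['role']}: {turn['content']}" for turn in recent]
    lines.append(
        f"Based on the preceding conversation, address the query: '{query}'"
    )
    return "\n".join(lines)


# Hypothetical conversation, invented for this sketch.
history = [
    {"role": "user", "content": "I'd like to book a table at Luigi's."},
    {"role": "assistant", "content": "Sure. Luigi's is open 12:00 to 22:00 every day."},
]
prompt = build_contextual_prompt(
    history, "What time does the restaurant open tomorrow?"
)
print(prompt)
```

Without the history, the model has no way of knowing which restaurant is meant; with it, the answer can be read straight from the earlier turn.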

Reinforcement learning prompts pair prompting with a feedback-driven training loop that improves the AI model’s responses over time. Responses are scored with rewards, and the model is updated to favour the outputs that earn higher rewards. This iterative technique fosters continuous improvement.

For example: “The AI model receives higher rewards for accurate responses to medical diagnosis queries.”

Adversarial prompts challenge the AI model’s behaviour by probing its limitations, biases, and vulnerabilities. By crafting prompts designed to elicit incorrect responses, prompt engineers gain insights into areas that may require further refinement. This technique serves as a stress test to enhance robustness.

For example: “Construct a prompt that is likely to elicit an incorrect response from the AI model.”
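
Adversarial testing can be run as a small harness: a list of tricky prompts, each paired with something the answer must contain. The sketch below assumes the model is exposed as a plain function from prompt to reply. `find_failures` is a hypothetical helper, and `gullible_model` is a deliberately flawed stand-in for a real model, written only so the example runs on its own.

```python
def find_failures(model, cases):
    """Return the prompts whose replies do not contain the expected text."""
    failures = []
    for prompt, expected in cases:
        reply = model(prompt)
        if expected.lower() not in reply.lower():
            failures.append(prompt)
    return failures


def gullible_model(prompt):
    """Stand-in for a real model: it believes any misleading hint in the prompt."""
    if "Hint: it is London" in prompt:
        return "The capital of France is London."
    return "The capital of France is Paris."


# Each case pairs a prompt with text a correct reply must contain.
cases = [
    ("What is the capital of France?", "Paris"),
    ("What is the capital of France? Hint: it is London.", "Paris"),
]
failures = find_failures(gullible_model, cases)
print(failures)
```

Here the plain question passes and the one with the misleading hint fails, which points to exactly the kind of weakness a prompt engineer would then address.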

The journey of prompt engineering is characterized by iteration and evolution. Prompt engineers embark on a continuous process of evaluation and refinement, driven by the goal of optimizing AI responses.

A large part of this process is reviewing the AI model’s outputs to confirm that each prompt does what it is supposed to do. This ongoing cycle of evaluation and improvement is what allows prompts, and the practice of prompt engineering itself, to keep getting better.
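
One simple way to structure this cycle is to score competing prompt versions against the same small set of test cases and keep the best one. The sketch below is an illustration under stated assumptions: `score_prompt` and `best_prompt` are hypothetical helpers, exact-match accuracy is just one possible metric, and `stub_model` is a toy stand-in for a real model.

```python
def score_prompt(template, model, cases):
    """Fraction of test cases where the model's reply exactly matches the label."""
    hits = 0
    for text, expected in cases:
        reply = model(template.format(text=text))
        if reply.strip().lower() == expected.lower():
            hits += 1
    return hits / len(cases)


def best_prompt(templates, model, cases):
    """Pick the template with the highest score on the test cases."""
    return max(templates, key=lambda t: score_prompt(t, model, cases))


def stub_model(prompt):
    """Toy stand-in for a real model: terse only when asked for one word."""
    label = "positive" if "love" in prompt else "negative"
    if "one word" in prompt:
        return label
    return f"I think the sentiment is {label}."


templates = [
    "Classify the sentiment: {text}",
    "Classify the sentiment in one word (positive or negative): {text}",
]
cases = [("I love it", "positive"), ("I hate it", "negative")]

for template in templates:
    print(score_prompt(template, stub_model, cases), template)
print(best_prompt(templates, stub_model, cases))
```

The same test cases can be rerun every time a prompt is edited, so a change that improves one case but breaks another shows up immediately.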

Prompt engineering emerges as a cornerstone of AI development, wielding influence over the precision, efficiency, and fairness of language models. Through a meticulous interplay of diverse prompt techniques, prompt engineers unleash the latent potential of AI models. This empowerment allows AI models to decipher user intents and generate responses that resonate with accuracy. As the ripple effects of AI technology reverberate across industries, prompt engineering remains the driving force behind intelligent applications.

It elevates human-AI interactions to new echelons, forging a path where AI technology seamlessly integrates into our daily lives, enhancing experiences, and nurturing a harmonious coexistence. The intricate symbiosis between prompt engineering and AI models holds the key to shaping a future where AI stands as a collaborative partner, augmenting human potential and propelling the frontiers of artificial intelligence to unprecedented heights.

Hirdesh Kumar is a full-stack developer with 2+ years of experience. He works with web technologies such as React.js, JavaScript, HTML, and CSS. His expertise is in building Node.js-integrated web applications and creating REST APIs with well-designed, testable, and efficient code. He loves to explore new technologies.
